Database Indexing Guide - Algorithms, Implementation, and Real-World Trade-offs

Master database indexing from fundamentals to advanced algorithms. Learn how B-trees, hash indexes, and full-text search work under the hood, with practical SQL and ORM examples for production systems.

#Introduction

Every database engineer has experienced it: a query that should take milliseconds crawls at seconds. The culprit? Missing or poorly designed indexes.

Indexing is one of the most powerful yet misunderstood tools in database optimization. It's the difference between scanning millions of rows and pinpointing exactly what you need. Yet many developers treat indexes as an afterthought, adding them only when performance becomes critical.

In production systems, indexes aren't optional—they're foundational. A well-indexed database can handle 10x more traffic with the same hardware. A poorly indexed one will collapse under load regardless of how much you scale.

This guide walks you through everything: what indexes are, why they matter, how different algorithms work internally, and how to implement them correctly in your applications.

#What is Database Indexing?

An index is a data structure that enables faster data retrieval. Think of it like a book's index—instead of reading every page to find mentions of "concurrency," you flip to the index, find the page numbers, and jump directly there.

Without indexes, databases perform full table scans. With indexes, they navigate directly to relevant data.

#The Core Problem Indexes Solve

Consider a table with 10 million user records. A query like:

SELECT * FROM users WHERE email = 'user@example.com'Without an index, the database reads all 10 million rows sequentially until it finds the match. With an index on the email column, it locates the record in milliseconds.

The trade-off? Indexes consume disk space and slow down writes (INSERT, UPDATE, DELETE) because the index must be updated alongside the table. This is why indexing strategy matters—you're optimizing for your access patterns.

#Where Indexes Are Used

Indexes accelerate:

- WHERE clauses - Filtering rows by specific conditions

- JOIN conditions - Matching rows across tables

- ORDER BY - Sorting results efficiently

- GROUP BY - Aggregating data

- DISTINCT - Finding unique values

- Range queries - Finding values between bounds

They don't help with:

- SELECT * - If you need all columns anyway

- Full table scans - When filtering returns most rows

- Unindexed columns in WHERE - The index must match your query pattern

#Pros and Cons of Indexing

#Advantages

- Dramatic query speedup - Often 100x-1000x faster for selective queries

- Reduced CPU usage - Less data processing means lower CPU load

- Better scalability - Handle more concurrent queries

- Predictable performance - Consistent response times

#Disadvantages

- Disk space overhead - Indexes duplicate data

- Write performance penalty - Every INSERT/UPDATE/DELETE updates indexes

- Maintenance cost - Indexes fragment over time, requiring rebuilds

- Query planner confusion - Poor indexes can mislead the optimizer

- Memory pressure - Large indexes compete for cache space

Tip

The best index is one you don't create. Before indexing, verify the query actually needs optimization. Use EXPLAIN ANALYZE to confirm the index helps.

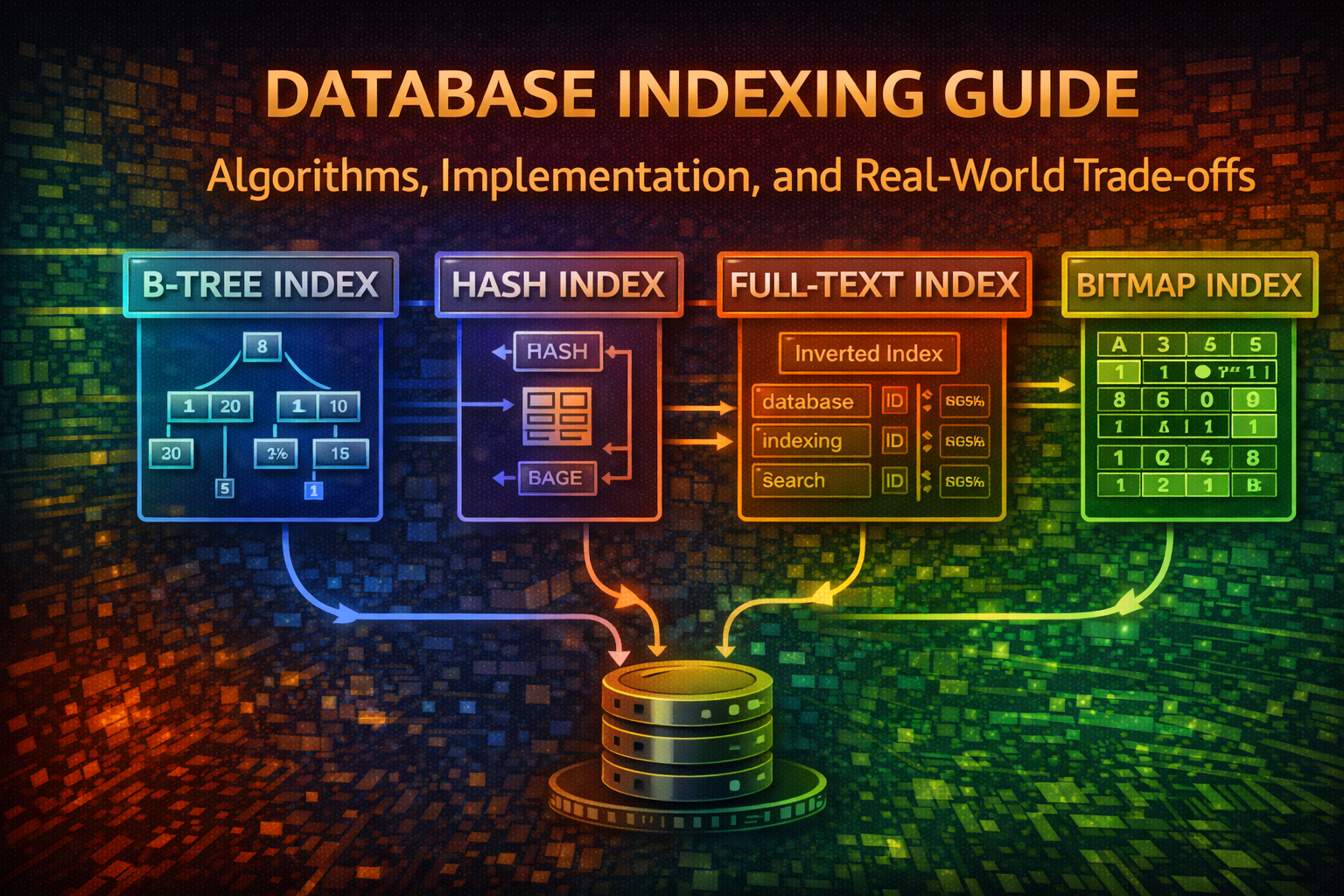

#Index Types and Algorithms

Different index types optimize for different access patterns. Let's explore the most common ones.

#B-Tree Indexes

B-trees are the default index type in most databases (PostgreSQL, MySQL, SQL Server). They're balanced, self-organizing trees optimized for disk-based storage.

#How B-Trees Work

A B-tree maintains sorted data across multiple levels:

[40, 80]

/ | \

[10,30] [50,70] [90,100]

/ | \ / | \ / | \

1 15 35 45 60 75 85 95 110Each node (called a page) holds multiple keys and pointers to child nodes. When you search for a value:

- Start at the root

- Compare your target with keys in the node

- Follow the appropriate pointer to the child node

- Repeat until you find the value or reach a leaf

Why B-trees excel:

- Balanced - All leaf nodes are at the same depth, guaranteeing O(log n) performance

- Disk-friendly - Nodes align with disk pages, minimizing I/O operations

- Range queries - Leaf nodes are linked, enabling efficient sequential scans

- Self-balancing - Insertions and deletions maintain balance automatically

#B-Tree Example in SQL

CREATE INDEX idx_users_email ON users(email);This creates a B-tree index on the email column. PostgreSQL and MySQL use B-trees by default.

#B-Tree in ORMs

model User {

id Int @id @default(autoincrement())

email String @unique

name String

@@index([email])

}@Entity()

export class User {

@PrimaryGeneratedColumn()

id: number;

@Column()

@Index()

email: string;

@Column()

name: string;

}#Hash Indexes

Hash indexes use a hash function to map values to bucket locations. They're extremely fast for equality lookups but don't support range queries.

#How Hash Indexes Work

Hash Function: hash(value) → bucket_id

email: "alice@example.com" → hash() → bucket 42 → [row_id: 1001]

email: "bob@example.com" → hash() → bucket 15 → [row_id: 2003]

email: "charlie@example.com" → hash() → bucket 42 → [row_id: 3005]When multiple values hash to the same bucket (collision), they're stored in a chain or overflow area.

Characteristics:

- O(1) average case - Direct bucket lookup

- Equality only - Can't do range queries like

WHERE age > 30 - Hash collisions - Performance degrades with poor hash functions

- Not ordered - Can't use for ORDER BY or range scans

#Hash Index Example

CREATE INDEX idx_users_email ON users USING HASH (email);Warning

Hash indexes in PostgreSQL are not crash-safe and rarely recommended. Use B-trees instead unless you have specific performance requirements.

#Full-Text Search Indexes

Full-text indexes optimize searching within text content. They tokenize text, remove stop words, and build inverted indexes for fast keyword matching.

#How Full-Text Search Works

Document: "The quick brown fox jumps over the lazy dog"

Tokenization:

[quick, brown, fox, jumps, lazy, dog]

Inverted Index:

quick → [doc_id: 1]

brown → [doc_id: 1]

fox → [doc_id: 1]

jumps → [doc_id: 1]

lazy → [doc_id: 1]

dog → [doc_id: 1]

Query: "quick fox"

→ Find docs containing "quick": [1]

→ Find docs containing "fox": [1]

→ Intersection: [1] ✓#Full-Text Search in SQL

CREATE TABLE articles (

id SERIAL PRIMARY KEY,

title TEXT,

content TEXT,

search_vector tsvector GENERATED ALWAYS AS (

to_tsvector('english', title || ' ' || content)

) STORED

);

CREATE INDEX idx_articles_search ON articles USING GIN (search_vector);

SELECT * FROM articles

WHERE search_vector @@ to_tsquery('english', 'database & indexing');CREATE TABLE articles (

id INT PRIMARY KEY AUTO_INCREMENT,

title VARCHAR(255),

content LONGTEXT,

FULLTEXT INDEX idx_search (title, content)

);

SELECT * FROM articles

WHERE MATCH(title, content) AGAINST('database indexing' IN BOOLEAN MODE);#Full-Text Search in ORMs

model Article {

id Int @id @default(autoincrement())

title String

content String

@@fulltext([title, content])

}

// Query

const results = await prisma.article.findMany({

where: {

OR: [

{ title: { search: 'database' } },

{ content: { search: 'indexing' } }

]

}

});#Bitmap Indexes

Bitmap indexes are efficient for columns with low cardinality (few distinct values). They use bit arrays where each bit represents a row.

#How Bitmap Indexes Work

Column: status (values: active, inactive, pending)

Bitmap for "active":

Row 1: 1

Row 2: 0

Row 3: 1

Row 4: 0

Row 5: 1

Bitmap for "inactive":

Row 1: 0

Row 2: 1

Row 3: 0

Row 4: 0

Row 5: 0

Bitmap for "pending":

Row 1: 0

Row 2: 0

Row 3: 0

Row 4: 1

Row 5: 0Queries use bitwise operations for fast filtering:

-- Find active OR pending users

-- Bitwise OR: [1,0,1,0,1] | [0,0,0,1,0] = [1,0,1,1,1]

SELECT * FROM users WHERE status IN ('active', 'pending');When to use:

- Low cardinality columns (< 100 distinct values)

- Data warehouse queries

- Columns like status, gender, country

Limitation: Not ideal for high-cardinality columns (many distinct values) because you'd need too many bitmaps.

#Partial Indexes

Partial indexes index only rows matching a condition, reducing size and improving performance.

-- Only index active users

CREATE INDEX idx_active_users ON users(email)

WHERE status = 'active';

-- Query uses the index

SELECT * FROM users WHERE status = 'active' AND email = 'user@example.com';This is faster than indexing all users because:

- Smaller index = faster searches

- Fewer updates (inactive users don't affect the index)

- Better cache locality

#Composite Indexes

Composite indexes span multiple columns, optimizing queries that filter on multiple fields.

CREATE INDEX idx_users_country_city ON users(country, city);

-- This query uses the index efficiently

SELECT * FROM users WHERE country = 'US' AND city = 'New York';

-- This also uses the index (country is first)

SELECT * FROM users WHERE country = 'US';

-- This does NOT use the index (city is first, violates column order)

SELECT * FROM users WHERE city = 'New York';Key principle: Composite indexes follow the leftmost prefix rule. Queries must use columns from left to right.

#Index Data Structures Deep Dive

#B+ Trees (Variant of B-Trees)

B+ trees are optimized for range queries. Unlike B-trees, all data is stored in leaf nodes, and internal nodes contain only keys for navigation.

Internal nodes:

[40, 80]

/ | \

[10,30] [50,70] [90,100]

Leaf nodes (linked):

[1,15,35] ↔ [45,60,75] ↔ [85,95,110]Advantages:

- Leaf nodes form a linked list, enabling efficient sequential scans

- Internal nodes are smaller, fitting more keys per page

- Better for range queries like

WHERE age BETWEEN 20 AND 40

Most modern databases (PostgreSQL, MySQL, SQLite) use B+ trees internally.

#LSM Trees (Log-Structured Merge Trees)

LSM trees optimize write-heavy workloads by batching writes in memory before flushing to disk.

Write path:

1. Write to in-memory buffer (MemTable)

2. When full, flush to disk as immutable SSTable

3. Periodically merge SSTables

Read path:

1. Check MemTable

2. Check recent SSTables

3. Check older SSTablesUsed by: RocksDB, LevelDB, Cassandra, HBase

Trade-off: Faster writes, slower reads (must check multiple levels)

#Hash Tables

Simple hash tables map keys directly to values. Used for in-memory caches and some database indexes.

Hash function: hash(key) % table_size

key: "user:123" → hash() % 1000 → bucket 456 → valuePros: O(1) average lookup Cons: No range queries, collision handling overhead

#Practical Implementation Examples

#SQL Index Creation

-- Single column index

CREATE INDEX idx_users_email ON users(email);

-- Unique index (enforces uniqueness)

CREATE UNIQUE INDEX idx_users_email_unique ON users(email);

-- Composite index

CREATE INDEX idx_orders_user_date ON orders(user_id, created_at);

-- Partial index (only active users)

CREATE INDEX idx_active_users ON users(email) WHERE status = 'active';

-- Descending index (for ORDER BY DESC)

CREATE INDEX idx_posts_date_desc ON posts(created_at DESC);

-- Expression index (index computed values)

CREATE INDEX idx_users_email_lower ON users(LOWER(email));

-- Full-text index (PostgreSQL)

CREATE INDEX idx_articles_search ON articles USING GIN (

to_tsvector('english', title || ' ' || content)

);#ORM Index Examples

model User {

id Int @id @default(autoincrement())

email String @unique

name String

status String

createdAt DateTime @default(now())

// Single column index

@@index([email])

// Composite index

@@index([status, createdAt])

// Full-text search

@@fulltext([name])

}

model Order {

id Int @id @default(autoincrement())

userId Int

amount Float

createdAt DateTime @default(now())

user User @relation(fields: [userId], references: [id])

// Foreign key index (usually automatic)

@@index([userId])

// Composite for common queries

@@index([userId, createdAt])

}import { Entity, PrimaryGeneratedColumn, Column, Index } from 'typeorm';

@Entity()

@Index(['email'])

@Index(['status', 'createdAt'])

export class User {

@PrimaryGeneratedColumn()

id: number;

@Column()

email: string;

@Column()

name: string;

@Column()

status: string;

@Column()

createdAt: Date;

}

@Entity()

@Index(['userId', 'createdAt'])

export class Order {

@PrimaryGeneratedColumn()

id: number;

@Column()

userId: number;

@Column()

amount: number;

@Column()

createdAt: Date;

}const User = sequelize.define('User', {

email: {

type: DataTypes.STRING,

allowNull: false,

index: true

},

name: DataTypes.STRING,

status: DataTypes.STRING

}, {

indexes: [

{ fields: ['email'] },

{ fields: ['status', 'createdAt'] }

]

});#Query Optimization with EXPLAIN

Understanding how your database uses indexes requires EXPLAIN analysis.

EXPLAIN ANALYZE

SELECT * FROM users WHERE email = 'user@example.com';

-- Output:

-- Seq Scan on users (cost=0.00..35.50 rows=1 width=100)

-- Filter: (email = 'user@example.com')

-- Planning Time: 0.123 ms

-- Execution Time: 2.456 ms

-- After creating index:

-- Index Scan using idx_users_email on users (cost=0.29..8.30 rows=1 width=100)

-- Index Cond: (email = 'user@example.com')

-- Planning Time: 0.089 ms

-- Execution Time: 0.234 msEXPLAIN

SELECT * FROM users WHERE email = 'user@example.com';

-- Output:

-- id | select_type | table | type | possible_keys | key | rows | Extra

-- 1 | SIMPLE | users | const | idx_email | idx_email | 1 | NULLKey metrics:

- Seq Scan - Full table scan (bad)

- Index Scan - Using an index (good)

- rows - Estimated rows examined

- cost - Relative query cost

#Common Mistakes and Pitfalls

#Over-Indexing

Creating too many indexes slows down writes without helping reads.

CREATE INDEX idx_users_email ON users(email);

CREATE INDEX idx_users_name ON users(name);

CREATE INDEX idx_users_phone ON users(phone);

CREATE INDEX idx_users_address ON users(address);

CREATE INDEX idx_users_city ON users(city);

-- Every INSERT now updates 5 indexes!Solution: Index only columns used in WHERE, JOIN, or ORDER BY clauses.

#Indexing High-Cardinality Columns Poorly

CREATE INDEX idx_users_status ON users(status);

-- If 90% of users are 'active', this index is uselessSolution: Use partial indexes for low-selectivity columns:

CREATE INDEX idx_inactive_users ON users(id) WHERE status != 'active';#Ignoring Column Order in Composite Indexes

CREATE INDEX idx_orders ON orders(created_at, user_id);

-- This query doesn't use the index efficiently

SELECT * FROM orders WHERE user_id = 123;Solution: Put frequently filtered columns first:

CREATE INDEX idx_orders ON orders(user_id, created_at);#Not Maintaining Indexes

Indexes fragment over time, degrading performance.

REINDEX INDEX idx_users_email;

-- Or rebuild all indexes on a table

REINDEX TABLE users;OPTIMIZE TABLE users;

-- Or

ANALYZE TABLE users;#Using Indexes for Columns in Functions

CREATE INDEX idx_users_email ON users(email);

-- This query doesn't use the index

SELECT * FROM users WHERE LOWER(email) = 'user@example.com';Solution: Create an expression index:

CREATE INDEX idx_users_email_lower ON users(LOWER(email));

SELECT * FROM users WHERE LOWER(email) = 'user@example.com';#Best Practices for Production

#1. Index Based on Query Patterns

Analyze your actual queries before indexing:

-- Enable query logging

ALTER SYSTEM SET log_min_duration_statement = 1000; -- Log queries > 1s

SELECT pg_reload_conf();

-- Check logs

SELECT query, mean_exec_time FROM pg_stat_statements

ORDER BY mean_exec_time DESC LIMIT 10;#2. Use Composite Indexes Strategically

-- Common query pattern

SELECT * FROM orders

WHERE user_id = ? AND status = ? AND created_at > ?;

-- Create composite index matching query pattern

CREATE INDEX idx_orders_lookup ON orders(user_id, status, created_at);#3. Monitor Index Usage

SELECT schemaname, tablename, indexname, idx_scan

FROM pg_stat_user_indexes

WHERE idx_scan = 0

ORDER BY pg_relation_size(indexrelid) DESC;SELECT object_schema, object_name, count_read, count_write

FROM performance_schema.table_io_waits_summary_by_index_usage

WHERE count_read = 0

ORDER BY count_write DESC;#4. Plan for Growth

-- PostgreSQL

SELECT pg_size_pretty(pg_relation_size('idx_users_email'));

-- MySQL

SELECT

ROUND(stat_value * @@innodb_page_size / 1024 / 1024, 2) AS size_mb

FROM mysql.innodb_index_stats

WHERE stat_name = 'size' AND index_name = 'idx_users_email';#5. Test Index Changes

Always test indexes in staging before production:

-- Non-blocking index creation

CREATE INDEX CONCURRENTLY idx_users_email ON users(email);-- Use ALGORITHM=INPLACE to avoid table lock

ALTER TABLE users ADD INDEX idx_email (email), ALGORITHM=INPLACE, LOCK=NONE;#When NOT to Use Indexes

#Small Tables

-- Table with < 1000 rows

-- Full scan is often faster than index lookup

SELECT * FROM countries WHERE name = 'United States';#High Write-to-Read Ratio

-- If you INSERT/UPDATE/DELETE more than you SELECT

-- Index maintenance overhead outweighs benefits

-- Consider denormalization or caching instead#Columns with NULL Values

-- Most databases don't index NULL values efficiently

-- Avoid indexing columns with many NULLs

CREATE INDEX idx_users_phone ON users(phone)

WHERE phone IS NOT NULL;#Temporary or Staging Tables

-- Temporary tables used for intermediate processing

-- Index overhead not worth the short lifespan

CREATE TEMPORARY TABLE staging_users AS

SELECT * FROM users WHERE status = 'pending';#Conclusion

Database indexing is both art and science. The fundamentals are straightforward—indexes accelerate lookups by organizing data—but mastering them requires understanding your access patterns, trade-offs, and the algorithms powering different index types.

Key takeaways:

- B-trees are the default for a reason: balanced, disk-friendly, and versatile

- Hash indexes are fast for equality but useless for ranges

- Full-text indexes unlock text search capabilities

- Composite indexes must match your query patterns

- Over-indexing hurts writes more than it helps reads

- Use EXPLAIN to verify indexes actually help

- Monitor and maintain indexes in production

Start by identifying your slowest queries with EXPLAIN ANALYZE. Create targeted indexes for those queries. Measure the impact. Remove indexes that don't help. This iterative approach beats guessing.

The best index is one that solves a real problem. Build with intention, measure results, and iterate.