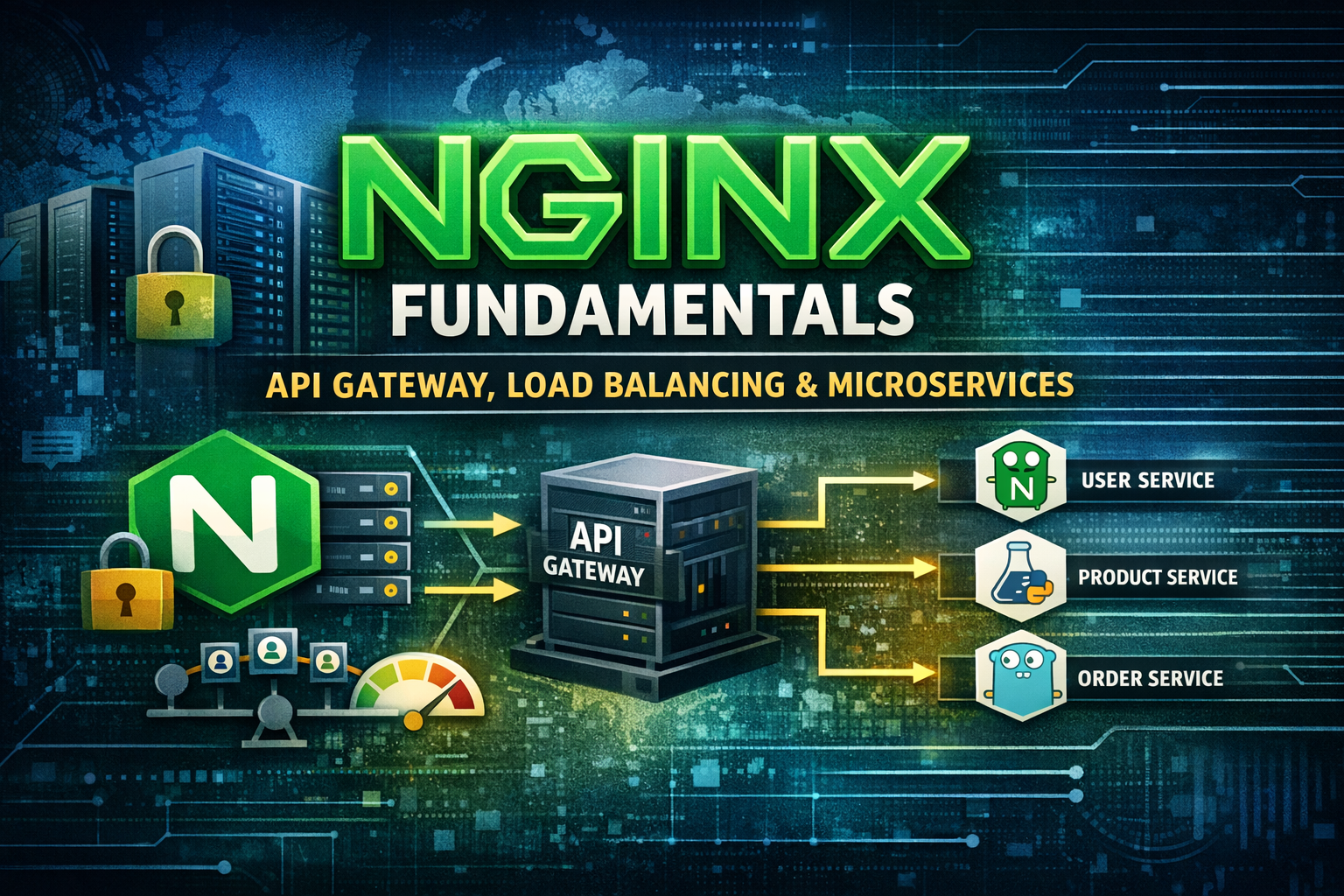

Nginx Fundamentals - From Web Server to API Gateway, Load Balancing, Rate Limiting, and Building Production Microservices

Master Nginx from core concepts to production. Learn web serving, reverse proxying, load balancing, rate limiting, authentication, and API gateway capabilities. Build a complete microservice architecture with multiple tech stacks and implement Nginx as a central gateway with best practices.

#Introduction

Web traffic is unpredictable. One moment you have normal load, the next moment thousands of users hit your application simultaneously. Without proper infrastructure, your servers crash, users get errors, and your business suffers.

Nginx is a high-performance web server and reverse proxy that handles millions of concurrent connections efficiently. Used by companies like Netflix, Airbnb, and Uber, Nginx is far more than just a web server—it's a complete solution for load balancing, API gateway functionality, rate limiting, authentication, and microservice orchestration.

In this article, we'll explore Nginx's architecture, understand its capabilities beyond basic web serving, and build a production-ready microservice architecture with Nginx as the central API gateway.

#Why Nginx Exists

#The Web Server Problem

Traditional web servers had significant limitations:

Process-per-connection: Apache's traditional prefork model dedicated one process (or thread) to each connection, limiting concurrency.

High Memory Usage: Each connection consumed significant resources.

Slow Performance: Couldn't handle thousands of concurrent connections efficiently.

Limited Flexibility: Difficult to implement advanced features like rate limiting or authentication.

Monolithic Design: Hard to extend without recompiling.

Difficult Scaling: Required complex load balancing setups.

#The Nginx Solution

Nginx was built to solve these problems:

Event-driven Architecture: Handles thousands of connections with minimal resources.

Asynchronous Processing: Non-blocking I/O for high performance.

Lightweight: Minimal memory footprint.

Highly Configurable: Powerful configuration language without recompilation.

Modular Design: Easy to extend with modules.

Reverse Proxy: Perfect for microservices and API gateways.

Load Balancing: Built-in algorithms for distributing traffic.

#Nginx Core Architecture

#Key Concepts

Master Process: Manages worker processes and configuration.

Worker Processes: Handle actual client connections.

Connection Pool: Efficient connection management.

Event Loop: Non-blocking event processing.

Upstream: Backend servers that Nginx proxies to.

Location Block: URL pattern matching and routing.

Server Block: Virtual host configuration.

Module: Extensible functionality.

#How Nginx Works

Client Request → Worker Process → Event Loop → Upstream Server → Response

- Client connects to Nginx

- A worker process accepts the connection (the master process only manages workers and configuration; it does not handle requests itself)

- Worker processes the request asynchronously

- Routes to upstream server

- Response sent back to client

- Connection kept alive or closed

#Nginx Architecture

Nginx Master Process

↓

Worker Process 1 ← Event Loop → Upstream Servers

Worker Process 2 ← Event Loop → Upstream Servers

Worker Process 3 ← Event Loop → Upstream Servers

Worker Process N ← Event Loop → Upstream Servers

Each worker handles thousands of connections simultaneously using event-driven architecture.
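To make the event-driven model concrete, here is a minimal Python sketch (not Nginx code) in which a single thread services many simulated connections through one event loop. The names `handle_connection` and `serve`, and the 10 ms simulated I/O wait, are illustrative assumptions.

```python
import asyncio
import threading
from typing import List, Tuple


async def handle_connection(conn_id: int) -> Tuple[int, int]:
    """Simulate one client connection that spends its time waiting on I/O."""
    # While this coroutine waits, the event loop is free to run the others.
    await asyncio.sleep(0.01)
    return conn_id, threading.get_ident()


async def serve(n_connections: int) -> List[Tuple[int, int]]:
    """Handle all connections concurrently on the current thread."""
    tasks = [handle_connection(i) for i in range(n_connections)]
    return await asyncio.gather(*tasks)


results = asyncio.run(serve(1000))
thread_ids = {thread_id for _, thread_id in results}
print(f"{len(results)} connections handled by {len(thread_ids)} thread(s)")
```

No connection blocks the others while it waits, which is the property that lets one Nginx worker keep many connections open without a process or thread per connection.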

#Nginx Core Concepts & Features

#1. Basic Web Server Configuration

Serve static files and basic HTTP.

server {

listen 80;

server_name example.com;

root /var/www/html;

index index.html index.htm;

location / {

try_files $uri $uri/ =404;

}

location ~* \.(jpg|jpeg|png|gif|ico|css|js)$ {

expires 30d;

add_header Cache-Control "public, immutable";

}

error_page 404 /404.html;

error_page 500 502 503 504 /50x.html;

}

Use Cases:

- Static File Serving: HTML, CSS, JavaScript

- Caching: Browser and proxy caching

- Compression: Gzip compression

- SSL/TLS: HTTPS support

#2. Reverse Proxy and Load Balancing

Route requests to backend servers.

upstream backend {

# Round-robin (default)

server backend1.example.com:8080;

server backend2.example.com:8080;

server backend3.example.com:8080;

# Least connections

# least_conn;

# IP hash (sticky sessions)

# ip_hash;

# Weighted round-robin

# server backend1.example.com:8080 weight=5;

# server backend2.example.com:8080 weight=3;

# server backend3.example.com:8080 weight=1;

}

server {

listen 80;

server_name api.example.com;

location / {

proxy_pass http://backend;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

}

}

Load Balancing Algorithms:

- Round-robin: Distribute equally

- Least connections: Send to least busy

- IP hash: Sticky sessions

- Weighted: Custom distribution

- Random: Random selection
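The selection strategies listed here can be sketched in a few lines of Python. This is an illustration of the ideas, not Nginx's implementation. The weighted variant uses the "smooth" weighted round-robin technique, which interleaves picks rather than sending five requests in a row to the heaviest server. The server names and connection counts are made up.

```python
from itertools import cycle


def round_robin(servers):
    """Yield servers in a fixed rotating order."""
    return cycle(servers)


def least_connections(active):
    """Pick the server with the fewest active connections."""
    return min(active, key=active.get)


class SmoothWeightedRoundRobin:
    """Weighted selection that interleaves picks instead of batching them."""

    def __init__(self, weights):
        self.weights = dict(weights)
        self.current = {name: 0 for name in self.weights}
        self.total = sum(self.weights.values())

    def pick(self):
        # Every server gains its weight, the leader is chosen, and the
        # leader is then pushed back by the total weight.
        for name, weight in self.weights.items():
            self.current[name] += weight
        chosen = max(self.current, key=self.current.get)
        self.current[chosen] -= self.total
        return chosen


rr = round_robin(["backend1", "backend2", "backend3"])
rr_picks = [next(rr) for _ in range(4)]

lc_pick = least_connections({"backend1": 12, "backend2": 3, "backend3": 7})

swrr = SmoothWeightedRoundRobin({"backend1": 5, "backend2": 3, "backend3": 1})
weighted_picks = [swrr.pick() for _ in range(9)]
print(rr_picks, lc_pick, weighted_picks)
```

Over any nine consecutive picks, the weighted selector chooses each server in proportion to its weight (5, 3, and 1 times), matching the `weight=` values in the commented-out configuration.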

Use Cases:

- Microservices: Route to multiple services

- High Availability: Failover to backup servers

- Scaling: Distribute load across servers

- Session Persistence: Keep user on same server

#3. Rate Limiting

Control request rate per client.

# Define rate limit zones

limit_req_zone $binary_remote_addr zone=api_limit:10m rate=10r/s;

limit_req_zone $binary_remote_addr zone=login_limit:10m rate=5r/m;

server {

listen 80;

server_name api.example.com;

# Apply rate limit to API

location /api/ {

limit_req zone=api_limit burst=20 nodelay;

proxy_pass http://backend;

}

# Stricter limit for login

location /login {

limit_req zone=login_limit burst=5 nodelay;

proxy_pass http://backend;

}

# No limit for static files

location ~* \.(jpg|jpeg|png|gif|css|js)$ {

proxy_pass http://backend;

}

}

Rate Limiting Options:

- rate: Requests per second/minute

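- burst: How many requests a client may run ahead of the rate before further requests are rejected

- nodelay: Serve requests within the burst immediately instead of delaying them to match the rate; requests beyond the burst are still rejected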

- zone: Named limit zone
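How `rate` and `burst` interact is easier to see in a small simulation. The sketch below is a simplified model of leaky-bucket accounting with `nodelay` behaviour, written for illustration. It is not Nginx's code, and the class name `LimitReqBucket` is hypothetical.

```python
class LimitReqBucket:
    """Simplified leaky-bucket state for one client key."""

    def __init__(self, rate, burst):
        self.rate = rate        # allowed requests per second
        self.burst = burst      # how far a client may run ahead of the rate
        self.excess = 0.0       # requests currently "ahead" of the rate
        self.last = None        # timestamp of the last accepted request

    def allow(self, now):
        """Return True if a request arriving at time `now` is accepted."""
        if self.last is None:
            self.last = now
            return True
        # The bucket drains at `rate` per second; this request adds one.
        excess = max(0.0, self.excess - self.rate * (now - self.last) + 1.0)
        if excess > self.burst:
            return False        # beyond the burst: rejected
        self.excess = excess
        self.last = now
        return True


bucket = LimitReqBucket(rate=10, burst=20)
# Thirty requests arrive at the same instant.
accepted = sum(bucket.allow(now=0.0) for _ in range(30))
# One second later the bucket has drained by ten requests.
later = bucket.allow(now=1.0)
print(accepted, later)
```

In this model, a simultaneous spike of 30 requests lets through the first request plus the burst of 20, and rejects the rest. Once time passes, the bucket drains and requests are accepted again.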

Use Cases:

- API Protection: Prevent abuse

- DDoS Mitigation: Limit attack impact

- Fair Usage: Prevent single user hogging

- Login Protection: Prevent brute force

#4. Authentication and Authorization

Control access to resources.

# Basic authentication

location /admin {

auth_basic "Admin Area";

auth_basic_user_file /etc/nginx/.htpasswd;

proxy_pass http://backend;

}

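# JWT authentication (auth_jwt comes from ngx_http_auth_jwt_module, which ships with NGINX Plus; open-source builds need a third-party module or the auth_request approach below)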

location /api/protected {

auth_jwt "";

auth_jwt_key_file /etc/nginx/jwt_key.json;

proxy_pass http://backend;

}

# Custom authentication via subrequest

location /api/ {

auth_request /auth;

proxy_pass http://backend;

}

location = /auth {

internal;

proxy_pass http://auth_service;

proxy_pass_request_body off;

proxy_set_header Content-Length "";

}

Authentication Methods:

- Basic Auth: Username/password

- JWT: Token-based

- OAuth: Third-party auth

- Custom: Via subrequest
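For the subrequest approach, the gateway's decision depends only on the status code the auth service returns. The mapping below follows the behaviour described in the `auth_request` module documentation. The function name `auth_request_outcome` is a hypothetical helper for illustration.

```python
from typing import Optional


def auth_request_outcome(subrequest_status: int) -> Optional[int]:
    """Return the status sent to the client, or None if the request proceeds."""
    if 200 <= subrequest_status < 300:
        return None                 # access allowed: continue to proxy_pass
    if subrequest_status in (401, 403):
        return subrequest_status    # access denied with the same code
    return 500                      # any other code is treated as an error


for status in (200, 204, 401, 403, 404, 503):
    print(status, "->", auth_request_outcome(status))
```

One practical consequence: an auth service that answers 404 or 503 makes the client see a 500, so the verify endpoint should only return 2xx, 401, or 403.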

Use Cases:

- Admin Panels: Restrict access

- API Security: Protect endpoints

- User Verification: Validate tokens

- Authorization: Role-based access

#5. URL Rewriting and Routing

Manipulate URLs and route requests.

server {

listen 80;

server_name example.com;

# Redirect HTTP to HTTPS

if ($scheme != "https") {

return 301 https://$server_name$request_uri;

}

# Rewrite URLs

rewrite ^/old-page$ /new-page permanent;

rewrite ^/blog/(.*)$ /articles/$1 last;

# Route based on URL pattern

location ~ ^/api/v1/ {

proxy_pass http://api_v1;

}

location ~ ^/api/v2/ {

proxy_pass http://api_v2;

}

# Route based on file extension

location ~ \.php$ {

proxy_pass http://php_backend;

}

# Restrict by request method: anything other than GET and HEAD requires basic auth

location /upload {

limit_except GET HEAD {

auth_basic "Upload Area";

auth_basic_user_file /etc/nginx/.htpasswd;

}

proxy_pass http://backend;

}

}

Routing Patterns:

- Exact match: location = /path

- Prefix match: location /path

- Regex match (case-sensitive): location ~ /path

- Regex match (case-insensitive): location ~* /path
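When several patterns match the same URI, Nginx applies a fixed precedence: an exact match wins, then the longest prefix is remembered, then regexes are tried in configuration order, and the remembered prefix is the fallback. The Python sketch below models that order in simplified form (it ignores named and nested locations). The `^~` modifier, which is not in the list above, marks a prefix that suppresses the regex search. The sample locations are hypothetical.

```python
import re


def match_location(uri, locations):
    """Pick a location for `uri`.

    `locations` is a list of (modifier, pattern) pairs in config order, where
    modifier is one of "=", "^~", "" (plain prefix), "~" or "~*".
    """
    # 1. An exact match wins immediately.
    for mod, pat in locations:
        if mod == "=" and uri == pat:
            return (mod, pat)

    # 2. Remember the longest matching prefix.
    best = None
    for mod, pat in locations:
        if mod in ("", "^~") and uri.startswith(pat):
            if best is None or len(pat) > len(best[1]):
                best = (mod, pat)

    # 3. A ^~ prefix suppresses the regex search.
    if best is not None and best[0] == "^~":
        return best

    # 4. Regexes are tried in config order; the first match wins.
    for mod, pat in locations:
        if mod in ("~", "~*"):
            flags = re.IGNORECASE if mod == "~*" else 0
            if re.search(pat, uri, flags):
                return (mod, pat)

    # 5. Otherwise fall back to the longest prefix (may be None).
    return best


locations = [
    ("", "/"),
    ("=", "/health"),
    ("^~", "/static/"),
    ("~", r"^/api/v1/"),
    ("~*", r"\.(jpg|png)$"),
    ("", "/api/"),
]

for uri in ("/health", "/static/logo.png", "/api/v1/users", "/api/v2/users", "/img/Photo.PNG"):
    print(uri, "->", match_location(uri, locations))
```

Note that `/api/v1/users` is handled by the regex location even though the `/api/` prefix also matches, because a matching regex takes precedence over a plain prefix.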

Use Cases:

- URL Rewriting: SEO-friendly URLs

- API Versioning: Route to different versions

- Microservice Routing: Route to different services

- Legacy Support: Redirect old URLs

#6. Caching and Performance

Cache responses for better performance.

# Define cache zones

proxy_cache_path /var/cache/nginx levels=1:2 keys_zone=api_cache:10m max_size=1g inactive=60m;

server {

listen 80;

server_name api.example.com;

# Cache GET requests

location /api/products {

proxy_cache api_cache;

proxy_cache_valid 200 10m;

proxy_cache_valid 404 1m;

proxy_cache_key "$scheme$request_method$host$request_uri";

proxy_cache_use_stale error timeout updating http_500 http_502 http_503 http_504;

add_header X-Cache-Status $upstream_cache_status;

proxy_pass http://backend;

}

# Don't cache POST requests

location /api/orders {

proxy_pass http://backend;

}

}

Cache Options:

- proxy_cache_valid: Cache duration by status

- proxy_cache_key: Cache key generation

- proxy_cache_use_stale: Use stale cache on error

- add_header: Show cache status
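The cache key and the `levels=1:2` parameter together decide where a cached response lives on disk: the file name is the MD5 hash of the key, and the directory levels are taken from the end of that hash. The sketch below reproduces that layout as an illustration. The function name `cache_file_path` and the sample request values are assumptions.

```python
import hashlib
from pathlib import PurePosixPath


def cache_file_path(cache_dir, key, levels=(1, 2)):
    """Map a cache key to its on-disk path for the given directory levels."""
    digest = hashlib.md5(key.encode()).hexdigest()
    parts = []
    end = len(digest)
    # Each level consumes characters from the end of the hash.
    for width in levels:
        parts.append(digest[end - width:end])
        end -= width
    return PurePosixPath(cache_dir, *parts, digest)


# Key built the same way as "$scheme$request_method$host$request_uri".
get_key = "http" + "GET" + "api.example.com" + "/api/products?page=1"
head_key = "http" + "HEAD" + "api.example.com" + "/api/products?page=1"

get_path = cache_file_path("/var/cache/nginx", get_key)
head_path = cache_file_path("/var/cache/nginx", head_key)
print(get_path)
print(head_path)
```

Because the request method is part of the key, GET and HEAD responses for the same URL are stored as separate entries. Dropping a variable from the key merges entries, and adding one (for example a header) splits them.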

Use Cases:

- API Caching: Cache API responses

- Static Content: Cache files

- Expensive Endpoints: Cache responses that depend on slow database queries

- Performance: Reduce backend load

#7. SSL/TLS and HTTPS

Secure connections with encryption.

server {

listen 443 ssl http2;

server_name example.com;

# SSL certificates

ssl_certificate /etc/nginx/ssl/certificate.crt;

ssl_certificate_key /etc/nginx/ssl/private.key;

# SSL protocols and ciphers

ssl_protocols TLSv1.2 TLSv1.3;

ssl_ciphers HIGH:!aNULL:!MD5;

ssl_prefer_server_ciphers on;

# SSL session caching

ssl_session_cache shared:SSL:10m;

ssl_session_timeout 10m;

# HSTS (HTTP Strict Transport Security)

add_header Strict-Transport-Security "max-age=31536000; includeSubDomains" always;

location / {

proxy_pass http://backend;

}

}

# Redirect HTTP to HTTPS

server {

listen 80;

server_name example.com;

return 301 https://$server_name$request_uri;

}

SSL/TLS Features:

- Certificates: SSL/TLS certificates

- Protocols: TLS version support

- Ciphers: Encryption algorithms

- HSTS: Force HTTPS

- Session Caching: Performance optimization

Use Cases:

- Security: Encrypt traffic

- Compliance: Meet security standards

- SEO: HTTPS ranking boost

- Trust: Show security badge

#8. Compression and Optimization

Reduce response size for faster delivery.

server {

listen 80;

server_name example.com;

# Enable gzip compression

gzip on;

gzip_vary on;

gzip_min_length 1000;

gzip_types text/plain text/css text/xml text/javascript

application/x-javascript application/xml+rss

application/json application/javascript;

gzip_disable "msie6";

# Compression level (1-9)

gzip_comp_level 6;

# Buffer settings

gzip_buffers 16 8k;

location / {

proxy_pass http://backend;

}

}

Compression Options:

- gzip_types: Content types to compress

- gzip_comp_level: Compression level

- gzip_min_length: Minimum size to compress

- gzip_disable: Disable for specific clients
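The trade-offs behind `gzip_comp_level` and `gzip_min_length` can be checked with Python's standard `gzip` module. This is a rough sketch with a made-up JSON payload. Actual ratios depend on the content being served.

```python
import gzip
import json

# A repetitive JSON body, similar in shape to a product listing response.
payload = json.dumps(
    [{"id": i, "name": f"product-{i}", "price": 9.99} for i in range(500)]
).encode()

# Compressed size at a few compression levels.
sizes = {
    level: len(gzip.compress(payload, compresslevel=level))
    for level in (1, 6, 9)
}

# A very small body grows when compressed because of the gzip framing.
tiny = b'{"ok":true}'
tiny_compressed = gzip.compress(tiny)

print("original:", len(payload), "compressed:", sizes)
print("tiny:", len(tiny), "->", len(tiny_compressed))
```

The tiny body comes out larger than it went in, which is the reason for setting a minimum length. Higher levels cost more CPU per response, so a middle value such as 6 is a common compromise.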

Use Cases:

- Bandwidth Reduction: Smaller responses

- Faster Loading: Quicker delivery

- Mobile Optimization: Reduce data usage

- Cost Savings: Lower bandwidth costs

#9. Logging and Monitoring

Track requests and performance.

# Custom log format

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

log_format detailed '$remote_addr - $remote_user [$time_local] '

'"$request" $status $body_bytes_sent '

'"$http_referer" "$http_user_agent" '

'rt=$request_time uct="$upstream_connect_time" '

'uht="$upstream_header_time" urt="$upstream_response_time"';

server {

listen 80;

server_name example.com;

# Access logs

access_log /var/log/nginx/access.log main;

access_log /var/log/nginx/detailed.log detailed;

# Error logs

error_log /var/log/nginx/error.log warn;

location / {

proxy_pass http://backend;

}

}

Log Variables:

- $remote_addr: Client IP

- $request: HTTP request

- $status: HTTP status code

- $body_bytes_sent: Response size

- $request_time: Total request time

- $upstream_response_time: Backend response time
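Once timing variables are in the log, a short script can turn log lines into numbers. The sketch below parses one line in the `detailed` format defined above and computes how much of the request time was spent outside the upstream. The sample line is invented, and the parser assumes a single upstream attempt per request (Nginx writes comma-separated values when it tries several).

```python
import re

LINE_RE = re.compile(
    r'(?P<remote_addr>\S+) - (?P<remote_user>\S+) \[(?P<time_local>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<body_bytes_sent>\d+) '
    r'"(?P<referer>[^"]*)" "(?P<user_agent>[^"]*)" '
    r'rt=(?P<request_time>[\d.]+) uct="(?P<upstream_connect_time>[^"]*)" '
    r'uht="(?P<upstream_header_time>[^"]*)" '
    r'urt="(?P<upstream_response_time>[^"]*)"'
)


def parse_line(line):
    """Parse one 'detailed' access-log line into a dict, or return None."""
    match = LINE_RE.match(line)
    if match is None:
        return None
    entry = match.groupdict()
    entry["status"] = int(entry["status"])
    entry["body_bytes_sent"] = int(entry["body_bytes_sent"])
    entry["request_time"] = float(entry["request_time"])
    # Upstream timings are "-" when no upstream was contacted.
    urt = entry["upstream_response_time"]
    entry["upstream_response_time"] = None if urt == "-" else float(urt)
    return entry


sample = (
    '203.0.113.7 - - [10/Oct/2024:13:55:36 +0000] '
    '"GET /api/products HTTP/1.1" 200 512 "-" "curl/8.5.0" '
    'rt=0.042 uct="0.001" uht="0.040" urt="0.041"'
)

entry = parse_line(sample)
overhead = entry["request_time"] - entry["upstream_response_time"]
print(entry["status"], entry["request"], f"gateway overhead: {overhead:.3f}s")
```

A large gap between `rt` and `urt` points at the gateway or the client connection, while a large `urt` points at the backend.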

Use Cases:

- Debugging: Troubleshoot issues

- Monitoring: Track performance

- Analytics: Analyze traffic

- Security: Detect attacks

#10. API Gateway Features

Advanced API gateway capabilities.

# API versioning

upstream api_v1 {

server api1.example.com:8080;

}

upstream api_v2 {

server api2.example.com:8080;

}

# Request/response modification

server {

listen 80;

server_name api.example.com;

# Add API key validation

location /api/ {

if ($http_x_api_key = "") {

return 401 "API key required";

}

# Route based on version

if ($uri ~ ^/api/v1/) {

proxy_pass http://api_v1;

}

if ($uri ~ ^/api/v2/) {

proxy_pass http://api_v2;

}

# Add request headers

proxy_set_header X-API-Key $http_x_api_key;

proxy_set_header X-Request-ID $request_id;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

}

# Health check endpoint

location /health {

access_log off;

default_type text/plain;

return 200 "healthy\n";

}

}

API Gateway Features:

- API Versioning: Route to different versions

- Request Validation: Check headers/parameters

- Rate Limiting: Prevent abuse

- Authentication: Validate API keys

- Request/Response Modification: Add/remove headers

- Health Checks: Monitor backend health

- Circuit Breaking: Handle failures gracefully
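In open-source Nginx, the closest thing to circuit breaking is passive failure tracking on upstream servers via the `max_fails` and `fail_timeout` parameters. The sketch below is a simplified model of that behaviour, not Nginx's implementation: after `max_fails` failures within `fail_timeout` seconds, the server is skipped for `fail_timeout` seconds. The class name `PassiveHealth` is hypothetical.

```python
class PassiveHealth:
    """Track failures for one upstream server and decide if it may be used."""

    def __init__(self, max_fails=3, fail_timeout=30.0):
        self.max_fails = max_fails
        self.fail_timeout = fail_timeout
        self.fails = 0
        self.first_fail = None   # start of the current failure window
        self.down_until = 0.0    # server is skipped until this time

    def available(self, now):
        return now >= self.down_until

    def record_failure(self, now):
        # Start a new counting window if the previous one has expired.
        if self.first_fail is None or now - self.first_fail > self.fail_timeout:
            self.first_fail = now
            self.fails = 0
        self.fails += 1
        if self.fails >= self.max_fails:
            # Trip the breaker: skip this server for fail_timeout seconds.
            self.down_until = now + self.fail_timeout
            self.fails = 0
            self.first_fail = None

    def record_success(self):
        self.fails = 0
        self.first_fail = None


backend = PassiveHealth(max_fails=3, fail_timeout=30.0)
for t in (0.0, 1.0, 2.0):
    backend.record_failure(now=t)

print(backend.available(now=10.0))  # still inside the timeout
print(backend.available(now=32.0))  # timeout elapsed, server is retried
```

Unlike a full circuit breaker, this scheme has no separate half-open state. The server simply becomes eligible again once the timeout passes, and the next failures start a new count.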

#Building a Production-Ready Microservice Architecture with Nginx

Now let's build a complete microservice architecture with Nginx as the API gateway. The system includes:

- User Service (Node.js/Express)

- Product Service (Python/FastAPI)

- Order Service (Go/Gin)

- Payment Service (Java/Spring Boot)

- Nginx API Gateway with rate limiting, authentication, and routing

#Project Structure

microservices/

├── nginx/

│ ├── nginx.conf

│ ├── conf.d/

│ │ ├── api-gateway.conf

│ │ ├── rate-limiting.conf

│ │ └── upstream.conf

│ └── ssl/

│ ├── certificate.crt

│ └── private.key

├── user-service/

│ ├── package.json

│ ├── server.js

│ └── Dockerfile

├── product-service/

│ ├── requirements.txt

│ ├── main.py

│ └── Dockerfile

├── order-service/

│ ├── go.mod

│ ├── main.go

│ └── Dockerfile

├── payment-service/

│ ├── pom.xml

│ ├── src/

│ └── Dockerfile

├── docker-compose.yml

└── README.md

#Step 1: Nginx Configuration

nginx/nginx.conf:

user nginx;

worker_processes auto;

error_log /var/log/nginx/error.log warn;

pid /var/run/nginx.pid;

events {

worker_connections 10000;

use epoll;

}

http {

include /etc/nginx/mime.types;

default_type application/octet-stream;

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 65;

types_hash_max_size 2048;

client_max_body_size 20M;

# Gzip compression

gzip on;

gzip_vary on;

gzip_min_length 1000;

gzip_types text/plain text/css text/xml text/javascript

application/x-javascript application/xml+rss

application/json application/javascript;

include /etc/nginx/conf.d/*.conf;

}

nginx/conf.d/upstream.conf:

# User Service

upstream user_service {

server user-service:3001;

}

# Product Service

upstream product_service {

server product-service:8000;

}

# Order Service

upstream order_service {

server order-service:8080;

}

# Payment Service

upstream payment_service {

server payment-service:8081;

}

# Auth Service

upstream auth_service {

server user-service:3001;

}

nginx/conf.d/rate-limiting.conf:

# Rate limiting zones

limit_req_zone $binary_remote_addr zone=api_limit:10m rate=100r/s;

limit_req_zone $binary_remote_addr zone=auth_limit:10m rate=10r/m;

limit_req_zone $binary_remote_addr zone=payment_limit:10m rate=5r/s;

# Connection limiting

limit_conn_zone $binary_remote_addr zone=addr:10m;

limit_conn addr 100;

nginx/conf.d/api-gateway.conf:

server {

listen 80;

server_name api.example.com;

# Redirect to HTTPS

return 301 https://$server_name$request_uri;

}

server {

listen 443 ssl http2;

server_name api.example.com;

# SSL configuration

ssl_certificate /etc/nginx/ssl/certificate.crt;

ssl_certificate_key /etc/nginx/ssl/private.key;

ssl_protocols TLSv1.2 TLSv1.3;

ssl_ciphers HIGH:!aNULL:!MD5;

ssl_prefer_server_ciphers on;

ssl_session_cache shared:SSL:10m;

ssl_session_timeout 10m;

# Security headers

add_header Strict-Transport-Security "max-age=31536000; includeSubDomains" always;

add_header X-Content-Type-Options "nosniff" always;

add_header X-Frame-Options "DENY" always;

add_header X-XSS-Protection "1; mode=block" always;

# Health check endpoint

location /health {

access_log off;

default_type text/plain;

return 200 "Gateway OK\n";

}

# User Service Routes

location ~ ^/api/v1/users {

limit_req zone=api_limit burst=20 nodelay;

# Authentication check

auth_request /auth;

auth_request_set $auth_status $upstream_status;

proxy_pass http://user_service;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

proxy_set_header X-Request-ID $request_id;

}

# Authentication endpoint (no auth required)

location ~ ^/api/v1/auth {

limit_req zone=auth_limit burst=5 nodelay;

proxy_pass http://auth_service;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

}

# Product Service Routes

location ~ ^/api/v1/products {

limit_req zone=api_limit burst=20 nodelay;

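# Cache GET requests. proxy_cache_path is only valid in the http context,

# so declare the product_cache zone outside any server block, for example

# at the top of this file:

# proxy_cache_path /var/cache/nginx levels=1:2 keys_zone=product_cache:10m max_size=100m;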

proxy_cache product_cache;

proxy_cache_valid 200 10m;

proxy_cache_key "$scheme$request_method$host$request_uri";

# Only cache GET requests

proxy_cache_methods GET HEAD;

add_header X-Cache-Status $upstream_cache_status;

proxy_pass http://product_service;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

}

# Order Service Routes

location ~ ^/api/v1/orders {

limit_req zone=api_limit burst=20 nodelay;

# Authentication required

auth_request /auth;

proxy_pass http://order_service;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

proxy_set_header X-Request-ID $request_id;

}

# Payment Service Routes

location ~ ^/api/v1/payments {

limit_req zone=payment_limit burst=5 nodelay;

# Authentication required

auth_request /auth;

proxy_pass http://payment_service;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

proxy_set_header X-Request-ID $request_id;

}

# Authentication subrequest

location = /auth {

internal;

proxy_pass http://auth_service/api/v1/auth/verify;

proxy_pass_request_body off;

proxy_set_header Content-Length "";

proxy_set_header X-Original-URI $request_uri;

proxy_set_header Authorization $http_authorization;

}

# Catch-all 404

location / {

default_type application/json;

return 404 '{"error": "Not Found"}';

}

}

#Step 2: User Service (Node.js/Express)

const express = require('express');

const jwt = require('jsonwebtoken');

const app = express();

app.use(express.json());

const JWT_SECRET = process.env.JWT_SECRET || 'secret-key';

// Mock user database

const users = [

{ id: 1, email: 'user@example.com', password: 'password123' }

];

// Login endpoint

app.post('/api/v1/auth/login', (req, res) => {

const { email, password } = req.body;

const user = users.find(u => u.email === email && u.password === password);

if (!user) {

return res.status(401).json({ error: 'Invalid credentials' });

}

const token = jwt.sign({ id: user.id, email: user.email }, JWT_SECRET, {

expiresIn: '24h'

});

res.json({ token, user: { id: user.id, email: user.email } });

});

// Verify token endpoint

app.get('/api/v1/auth/verify', (req, res) => {

const token = req.headers.authorization?.split(' ')[1];

if (!token) {

return res.status(401).json({ error: 'No token' });

}

try {

const decoded = jwt.verify(token, JWT_SECRET);

res.json({ valid: true, user: decoded });

} catch (error) {

res.status(401).json({ error: 'Invalid token' });

}

});

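// Get users endpoint (passwords are stripped before responding)

app.get('/api/v1/users', (req, res) => {

res.json({ users: users.map(({ password, ...rest }) => rest) });

});

// Get user by ID

app.get('/api/v1/users/:id', (req, res) => {

const user = users.find(u => u.id === parseInt(req.params.id, 10));

if (!user) {

return res.status(404).json({ error: 'User not found' });

}

// Never return the stored password to the client

const { password, ...safeUser } = user;

res.json(safeUser);

});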

app.listen(3001, () => {

console.log('User Service running on port 3001');

});

#Step 3: Product Service (Python/FastAPI)

from fastapi import FastAPI, HTTPException
from pydantic import BaseModel
from typing import List

app = FastAPI()


class Product(BaseModel):
    id: int
    name: str
    price: float
    stock: int


# Mock product database
products = [
    Product(id=1, name="Laptop", price=999.99, stock=10),
    Product(id=2, name="Mouse", price=29.99, stock=50),
    Product(id=3, name="Keyboard", price=79.99, stock=30),
]


@app.get("/api/v1/products", response_model=List[Product])
async def get_products(skip: int = 0, limit: int = 10):
    return products[skip:skip + limit]


@app.get("/api/v1/products/{product_id}", response_model=Product)
async def get_product(product_id: int):
    product = next((p for p in products if p.id == product_id), None)
    if not product:
        raise HTTPException(status_code=404, detail="Product not found")
    return product


@app.post("/api/v1/products", response_model=Product)
async def create_product(product: Product):
    products.append(product)
    return product


@app.get("/health")
async def health():
    return {"status": "ok"}


if __name__ == "__main__":
    import uvicorn

    uvicorn.run(app, host="0.0.0.0", port=8000)

#Step 4: Order Service (Go/Gin)

package main

import (
    "net/http"
    "strconv"

    "github.com/gin-gonic/gin"
)

type Order struct {
    ID        int     `json:"id"`
    UserID    int     `json:"user_id"`
    ProductID int     `json:"product_id"`
    Quantity  int     `json:"quantity"`
    Total     float64 `json:"total"`
}

// In-memory store for the demo; initialised empty so the list endpoint
// returns [] rather than null. It is not safe for concurrent writes.
var orders = []Order{}

func main() {
    router := gin.Default()

    // Get all orders
    router.GET("/api/v1/orders", func(c *gin.Context) {
        c.JSON(http.StatusOK, orders)
    })

    // Get order by ID
    router.GET("/api/v1/orders/:id", func(c *gin.Context) {
        // Parse the path parameter and compare against it
        id, err := strconv.Atoi(c.Param("id"))
        if err != nil {
            c.JSON(http.StatusBadRequest, gin.H{"error": "Invalid order ID"})
            return
        }
        for _, order := range orders {
            if order.ID == id {
                c.JSON(http.StatusOK, order)
                return
            }
        }
        c.JSON(http.StatusNotFound, gin.H{"error": "Order not found"})
    })

    // Create order
    router.POST("/api/v1/orders", func(c *gin.Context) {
        var order Order
        if err := c.ShouldBindJSON(&order); err != nil {
            c.JSON(http.StatusBadRequest, gin.H{"error": err.Error()})
            return
        }
        order.ID = len(orders) + 1
        orders = append(orders, order)
        c.JSON(http.StatusCreated, order)
    })

    // Health check
    router.GET("/health", func(c *gin.Context) {
        c.JSON(http.StatusOK, gin.H{"status": "ok"})
    })

    router.Run(":8080")
}

#Step 5: Docker Compose Setup

version: '3.8'

services:
  nginx:
    image: nginx:latest
    ports:
      - "80:80"
      - "443:443"
    volumes:
      - ./nginx/nginx.conf:/etc/nginx/nginx.conf:ro
      - ./nginx/conf.d:/etc/nginx/conf.d:ro
      - ./nginx/ssl:/etc/nginx/ssl:ro
    depends_on:
      - user-service
      - product-service
      - order-service
      - payment-service
    networks:
      - microservices

  user-service:
    build: ./user-service
    ports:
      - "3001:3001"
    environment:
      - JWT_SECRET=your-secret-key
    networks:
      - microservices

  product-service:
    build: ./product-service
    ports:
      - "8000:8000"
    networks:
      - microservices

  order-service:
    build: ./order-service
    ports:
      - "8080:8080"
    networks:
      - microservices

  payment-service:
    image: openjdk:11
    ports:
      - "8081:8081"
    networks:
      - microservices

networks:
  microservices:
    driver: bridge

#Step 6: Running the System

# Build and start all services

docker-compose up -d

# Check service health (port 80 only redirects to HTTPS; -k skips certificate verification, assuming a self-signed certificate)

curl -k https://localhost/health

# Login to get token

curl -k -X POST https://localhost/api/v1/auth/login \
-H "Content-Type: application/json" \
-d '{"email":"user@example.com","password":"password123"}'

# Get products (cached)

curl -k https://localhost/api/v1/products

# Create order (requires auth)

curl -k -X POST https://localhost/api/v1/orders \
-H "Authorization: Bearer YOUR_TOKEN" \
-H "Content-Type: application/json" \
-d '{"user_id":1,"product_id":1,"quantity":2,"total":1999.98}'

# View Nginx logs

docker-compose logs -f nginx

#Common Mistakes & Pitfalls

#1. Not Setting Proxy Headers

# ❌ Wrong - loses client information

location / {

proxy_pass http://backend;

}

# ✅ Correct - preserves client info

location / {

proxy_pass http://backend;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

}

#2. Inefficient Caching

# ❌ Wrong - no proxy_cache_valid, so only responses whose upstream headers (Cache-Control, Expires) allow it are cached

proxy_cache_path /var/cache/nginx levels=1:2 keys_zone=cache:10m;

location / {

proxy_cache cache;

proxy_pass http://backend;

}

# ✅ Correct - explicit cache lifetime; GET and HEAD are already the default cacheable methods and are stated here for clarity

location / {

proxy_cache_methods GET HEAD;

proxy_cache cache;

proxy_cache_valid 200 10m;

proxy_pass http://backend;

}

#3. Missing Error Handling

# ❌ Wrong - no fallback on error

location / {

proxy_pass http://backend;

}

# ✅ Correct - handle errors gracefully

location / {

proxy_pass http://backend;

proxy_intercept_errors on;

error_page 502 503 504 /50x.html;

}

#4. Loose Rate Limiting

# ❌ Wrong - too permissive

limit_req_zone $binary_remote_addr zone=limit:10m rate=1000r/s;

# ✅ Correct - appropriate limits

limit_req_zone $binary_remote_addr zone=api_limit:10m rate=100r/s;

limit_req_zone $binary_remote_addr zone=auth_limit:10m rate=10r/m;

#5. Not Using Upstream Health Checks

# ❌ Wrong - failure handling left at the defaults (max_fails=1, fail_timeout=10s)

upstream backend {

server backend1:8080;

server backend2:8080;

}

# ✅ Correct - passive health checks tuned explicitly

upstream backend {

server backend1:8080 max_fails=3 fail_timeout=30s;

server backend2:8080 max_fails=3 fail_timeout=30s;

}

#Best Practices

#1. Use Specific Upstream Servers

# ✅ Good - specific servers

upstream backend {

server backend1.internal:8080;

server backend2.internal:8080;

server backend3.internal:8080;

}

#2. Implement Proper Logging

# ✅ Good - detailed logging

log_format detailed '$remote_addr - $remote_user [$time_local] '

'"$request" $status $body_bytes_sent '

'rt=$request_time uct="$upstream_connect_time" '

'uht="$upstream_header_time" urt="$upstream_response_time"';

access_log /var/log/nginx/access.log detailed;

#3. Use Connection Pooling

# ✅ Good - connection pooling

upstream backend {

server backend1:8080;

keepalive 32;

}

location / {

proxy_pass http://backend;

proxy_http_version 1.1;

proxy_set_header Connection "";

}

#4. Monitor Performance

# ✅ Good - track performance metrics

add_header X-Response-Time $request_time;

add_header X-Upstream-Time $upstream_response_time;

#5. Implement Circuit Breaking

# ✅ Good - circuit breaking

upstream backend {

server backend1:8080 max_fails=5 fail_timeout=30s;

server backend2:8080 max_fails=5 fail_timeout=30s;

}

#6. Use Least Connections

# ✅ Good - least connections algorithm

upstream backend {

least_conn;

server backend1:8080;

server backend2:8080;

server backend3:8080;

}

#7. Implement Request Timeouts

# ✅ Good - appropriate timeouts

proxy_connect_timeout 5s;

proxy_send_timeout 10s;

proxy_read_timeout 10s;

#8. Use HTTP/2

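# ✅ Good - HTTP/2 support (from nginx 1.25.1, the http2 listen parameter is deprecated in favor of a separate "http2 on;" directive)

listen 443 ssl http2;

#Conclusion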

Nginx is far more than a web server—it's a complete solution for modern application infrastructure. Understanding its capabilities enables you to build scalable, reliable, and secure systems.

Key takeaways:

- Use Nginx as reverse proxy for microservices

- Implement rate limiting to prevent abuse

- Cache responses for better performance

- Use authentication for protected endpoints

- Monitor performance with detailed logging

- Implement health checks for reliability

- Use load balancing algorithms appropriately

- Secure with SSL/TLS and security headers

Next steps:

- Set up Nginx locally

- Configure basic reverse proxy

- Add rate limiting

- Implement caching

- Set up SSL/TLS

- Monitor performance

- Scale to production

Nginx makes building scalable infrastructure accessible. Master it, and you'll build systems that handle millions of requests reliably and efficiently.