Panduan Database Type - SQL, NoSQL, Cache, Vector, dan Search Engine

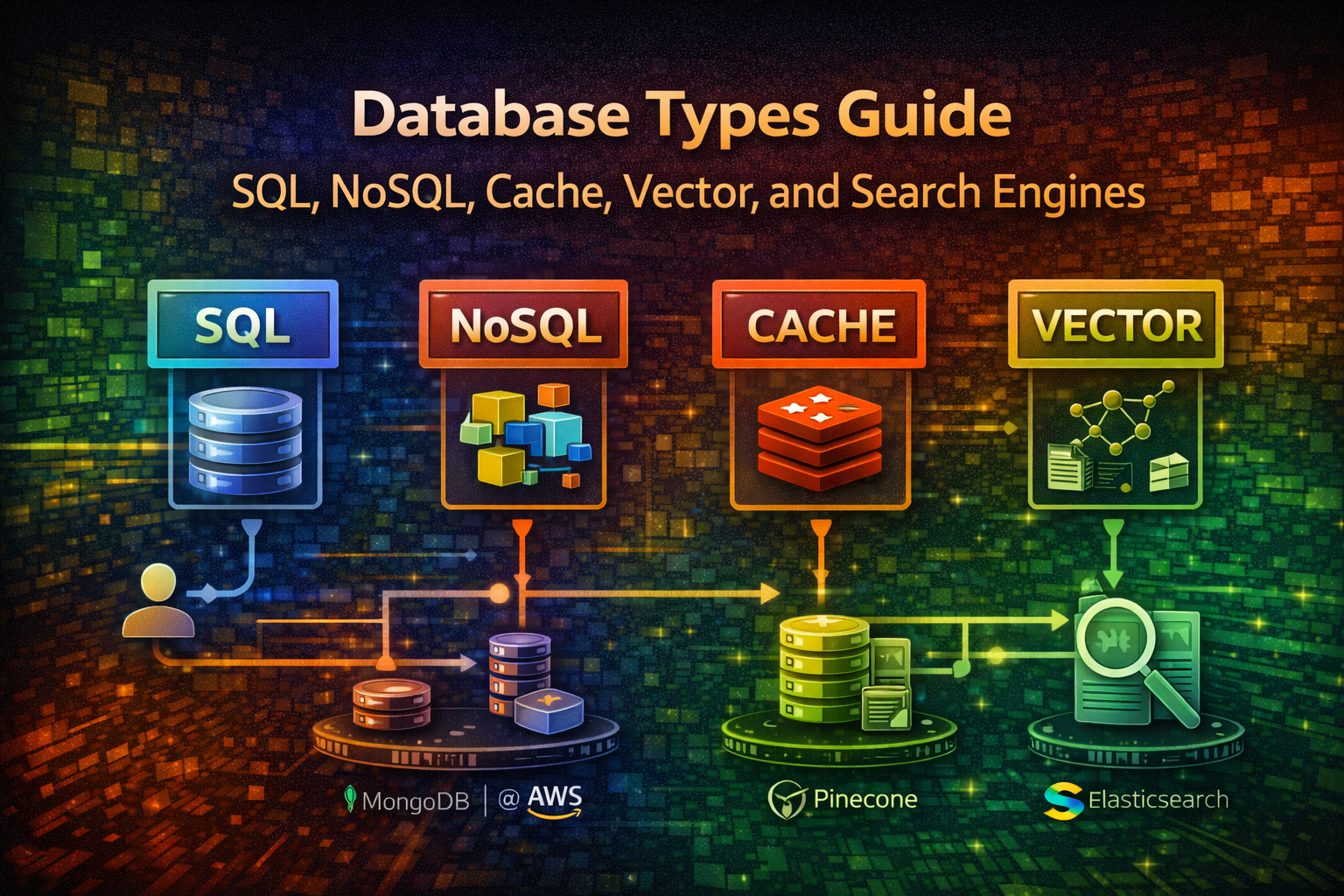

Kuasai polyglot persistence. Pelajari kapan menggunakan SQL, NoSQL, Redis, vector database, dan search engine dengan real-world example dan production code.

#Pengenalan

Memilih database yang tepat adalah salah satu keputusan architectural paling critical yang akan Anda buat. Pilihan yang salah leads ke performance bottleneck, scaling nightmare, dan expensive refactoring di kemudian hari.

Sebagian besar team mulai dengan single database dan bertanya-tanya mengapa sistem mereka struggle. Realitasnya: tidak ada single database yang excel di semuanya. Modern system menggunakan multiple database—pattern yang disebut polyglot persistence. Anda mungkin menggunakan PostgreSQL untuk transactional data, Redis untuk caching, Elasticsearch untuk full-text search, dan vector database untuk AI feature.

Panduan ini memotong kebisingan. Anda akan belajar apa yang setiap database type lakukan, kapan menggunakannya, dan cara benar-benar mengimplementasikannya dalam kode.

#Relational Database (SQL)

#Apa Itu

Relational database menyimpan data dalam structured table dengan predefined schema. Mereka enforce ACID property (Atomicity, Consistency, Isolation, Durability) dan menggunakan SQL untuk query.

Common example: PostgreSQL, MySQL, MariaDB, SQL Server

#Kapan Menggunakan

- Transactional system (banking, payment, order)

- Complex query dengan join di multiple table

- Data integrity adalah critical

- Anda perlu strong consistency guarantee

- Financial record, user account, inventory

#Real-World Contoh

Platform e-commerce perlu track order, customer, dan inventory. Ketika customer place order, Anda harus:

- Deduct inventory

- Create order record

- Update customer purchase history

Semua harus succeed atau semua harus fail. SQL database handle ini dengan transaction.

#Code Contoh

import { Pool } from 'pg';

const pool = new Pool({

connectionString: 'postgresql://user:password@localhost:5432/ecommerce'

});

async function createOrder(customerId: number, items: OrderItem[]) {

const client = await pool.connect();

try {

await client.query('BEGIN');

// Create order

const orderResult = await client.query(

'INSERT INTO orders (customer_id, total_amount, status) VALUES ($1, $2, $3) RETURNING id',

[customerId, calculateTotal(items), 'pending']

);

const orderId = orderResult.rows[0].id;

// Add order item

for (const item of items) {

await client.query(

'INSERT INTO order_items (order_id, product_id, quantity) VALUES ($1, $2, $3)',

[orderId, item.productId, item.quantity]

);

// Deduct inventory

await client.query(

'UPDATE products SET stock = stock - $1 WHERE id = $2',

[item.quantity, item.productId]

);

}

await client.query('COMMIT');

return orderId;

} catch (error) {

await client.query('ROLLBACK');

throw error;

} finally {

client.release();

}

}#Key Characteristic

| Aspect | Details |

|---|---|

| Consistency | Strong (ACID) |

| Scalability | Vertical (scale up) |

| Query Language | SQL |

| Schema | Fixed, predefined |

| Join | Native support |

| Transaction | Full ACID support |

#NoSQL Database

#Document Store (MongoDB, Firebase)

Menyimpan data sebagai JSON-like document. Tidak ada fixed schema—setiap document dapat memiliki field berbeda.

Kapan menggunakan:

- Flexible, evolving data structure

- Rapid prototyping

- Hierarchical data (user profile dengan nested preference)

- Content management system

import { MongoClient } from 'mongodb';

const client = new MongoClient('mongodb://localhost:27017');

const db = client.db('social_app');

const users = db.collection('users');

async function createUserProfile(userData: any) {

// Tidak ada schema enforcement—add any field

const result = await users.insertOne({

username: userData.username,

email: userData.email,

profile: {

bio: userData.bio,

avatar: userData.avatar,

preferences: {

theme: 'dark',

notifications: true,

language: 'en'

}

},

createdAt: new Date(),

tags: ['developer', 'open-source'] // Array field

});

return result.insertedId;

}

async function updateUserPreferences(userId: string, preferences: any) {

await users.updateOne(

{ _id: new ObjectId(userId) },

{ $set: { 'profile.preferences': preferences } }

);

}

async function findUsersByTag(tag: string) {

return users.find({ tags: tag }).toArray();

}#Key-Value Store (Redis, Memcached)

Ultra-fast in-memory database. Menyimpan simple key-value pair.

Kapan menggunakan:

- Session storage

- Real-time leaderboard

- Rate limiting

- Caching

- Pub/Sub messaging

import { createClient } from 'redis';

const redis = createClient({

host: 'localhost',

port: 6379

});

await redis.connect();

// Simple caching

async function getUserWithCache(userId: string) {

const cached = await redis.get(`user:${userId}`);

if (cached) return JSON.parse(cached);

const user = await fetchFromDatabase(userId);

await redis.setEx(`user:${userId}`, 3600, JSON.stringify(user)); // 1 hour TTL

return user;

}

// Leaderboard

async function updateLeaderboard(userId: string, score: number) {

await redis.zAdd('leaderboard', { score, member: userId });

}

async function getTopPlayers(limit: number = 10) {

return redis.zRangeByScore('leaderboard', 0, Infinity, {

REV: true,

LIMIT: { offset: 0, count: limit }

});

}

// Rate limiting

async function checkRateLimit(userId: string, limit: number = 100) {

const key = `rate:${userId}`;

const current = await redis.incr(key);

if (current === 1) {

await redis.expire(key, 60); // Reset setiap menit

}

return current <= limit;

}#Column-Family Store (Cassandra, HBase)

Dioptimalkan untuk time-series data dan massive scale. Menyimpan data dalam column daripada row.

Kapan menggunakan:

- Time-series metric (monitoring, analytics)

- Massive scale (miliaran row)

- High write throughput

- Distributed system

import { Client } from 'cassandra-driver';

const client = new Client({

contactPoints: ['127.0.0.1'],

localDataCenter: 'datacenter1',

keyspace: 'metrics'

});

await client.connect();

async function recordMetric(

serverId: string,

timestamp: Date,

cpuUsage: number,

memoryUsage: number

) {

const query = `

INSERT INTO server_metrics (server_id, timestamp, cpu_usage, memory_usage)

VALUES (?, ?, ?, ?)

`;

await client.execute(query, [serverId, timestamp, cpuUsage, memoryUsage], {

prepare: true

});

}

async function getMetricsRange(

serverId: string,

startTime: Date,

endTime: Date

) {

const query = `

SELECT * FROM server_metrics

WHERE server_id = ? AND timestamp >= ? AND timestamp <= ?

`;

const result = await client.execute(query, [serverId, startTime, endTime], {

prepare: true

});

return result.rows;

}#In-Memory Database

#Redis (Advanced)

Beyond caching, Redis handle complex data structure dan real-time operation.

import { createClient } from 'redis';

const redis = createClient();

await redis.connect();

// Publish event ke stream

async function logUserActivity(userId: string, action: string, metadata: any) {

await redis.xAdd(

'user_activity',

'*', // Auto-generate ID

{

userId,

action,

timestamp: Date.now().toString(),

metadata: JSON.stringify(metadata)

}

);

}

// Consumer group untuk processing

async function processActivityStream() {

await redis.xGroupCreate('user_activity', 'analytics_group', '$', {

MKSTREAM: true

});

while (true) {

const messages = await redis.xReadGroup(

{ key: 'user_activity', group: 'analytics_group', consumer: 'worker-1' },

{ count: 10, block: 1000 }

);

for (const message of messages || []) {

console.log('Processing:', message.message);

// Process event...

await redis.xAck('user_activity', 'analytics_group', message.id);

}

}

}

// Geospatial query

async function findNearbyUsers(latitude: number, longitude: number, radiusKm: number) {

return redis.geoRadius('user_locations', longitude, latitude, radiusKm, 'km');

}#Vector Database

#Apa Itu

Specialized database untuk menyimpan dan query embedding (vector representation dari data). Essential untuk AI/ML feature seperti semantic search dan recommendation.

Common example: Pinecone, Weaviate, Milvus, Qdrant, Chroma

#Kapan Menggunakan

- Semantic search (find similar document)

- AI-powered recommendation

- Similarity matching

- Anomaly detection

- Image/audio search

#Real-World Contoh

SaaS platform ingin recommend similar article ke user. Daripada keyword matching, Anda gunakan embedding untuk find semantically similar content.

import { Pinecone } from '@pinecone-database/pinecone';

import { OpenAIEmbeddings } from 'langchain/embeddings/openai';

const pinecone = new Pinecone({

apiKey: process.env.PINECONE_API_KEY

});

const embeddings = new OpenAIEmbeddings({

openAIApiKey: process.env.OPENAI_API_KEY

});

async function indexArticle(articleId: string, content: string) {

// Generate embedding dari content

const embedding = await embeddings.embedQuery(content);

// Store dalam vector database

const index = pinecone.Index('articles');

await index.upsert([

{

id: articleId,

values: embedding,

metadata: {

title: content.substring(0, 100),

type: 'article'

}

}

]);

}

async function findSimilarArticles(articleId: string, topK: number = 5) {

const index = pinecone.Index('articles');

// Query by ID untuk find similar article

const results = await index.query({

id: articleId,

topK,

includeMetadata: true

});

return results.matches.map(match => ({

id: match.id,

similarity: match.score,

title: match.metadata?.title

}));

}

async function semanticSearch(query: string) {

const queryEmbedding = await embeddings.embedQuery(query);

const index = pinecone.Index('articles');

const results = await index.query({

vector: queryEmbedding,

topK: 10,

includeMetadata: true

});

return results.matches;

}#Search Engine

#Apa Itu

Specialized database dioptimalkan untuk full-text search, analytics, dan complex filtering. Built pada inverted index untuk fast text query.

Common example: Elasticsearch, OpenSearch, Meilisearch, Typesense

#Kapan Menggunakan

- Full-text search (Google-like search)

- Log aggregation dan analysis

- Real-time analytics

- Complex filtering dan faceting

- Auto-complete dan suggestion

#Real-World Contoh

Platform e-commerce perlu fast product search dengan filter. User search untuk "blue running shoes" dan expect result dalam millisecond dengan facet untuk price, brand, size.

import { Client } from '@elastic/elasticsearch';

const client = new Client({ node: 'http://localhost:9200' });

// Index product

async function indexProduct(product: any) {

await client.index({

index: 'products',

id: product.id,

document: {

name: product.name,

description: product.description,

price: product.price,

brand: product.brand,

category: product.category,

tags: product.tags,

rating: product.rating,

inStock: product.stock > 0

}

});

}

// Full-text search dengan filter

async function searchProducts(query: string, filters: any = {}) {

const result = await client.search({

index: 'products',

body: {

query: {

bool: {

must: [

{

multi_match: {

query,

fields: ['name^2', 'description', 'tags'],

fuzziness: 'AUTO'

}

}

],

filter: [

{ term: { inStock: true } },

...(filters.brand ? [{ term: { brand: filters.brand } }] : []),

...(filters.minPrice ? [{ range: { price: { gte: filters.minPrice } } }] : []),

...(filters.maxPrice ? [{ range: { price: { lte: filters.maxPrice } } }] : [])

]

}

},

aggs: {

brands: { terms: { field: 'brand', size: 10 } },

priceRanges: {

range: {

field: 'price',

ranges: [

{ to: 50 },

{ from: 50, to: 100 },

{ from: 100, to: 200 },

{ from: 200 }

]

}

}

},

size: 20

}

});

return {

hits: result.hits.hits.map(hit => hit._source),

facets: result.aggregations,

total: result.hits.total.value

};

}

// Auto-complete

async function autocomplete(prefix: string) {

const result = await client.search({

index: 'products',

body: {

query: {

match_phrase_prefix: {

name: prefix

}

},

size: 10

}

});

return result.hits.hits.map(hit => hit._source.name);

}

// Aggregation untuk analytics

async function getProductAnalytics() {

const result = await client.search({

index: 'products',

body: {

size: 0,

aggs: {

avgPrice: { avg: { field: 'price' } },

avgRating: { avg: { field: 'rating' } },

topBrands: { terms: { field: 'brand', size: 5 } },

priceDistribution: {

histogram: { field: 'price', interval: 50 }

}

}

}

});

return result.aggregations;

}#Graph Database

#Apa Itu

Optimize untuk relationship antara data. Menyimpan node (entity) dan edge (relationship) dengan property.

Common example: Neo4j, ArangoDB, TigerGraph

#Kapan Menggunakan

- Social network (follower, connection)

- Recommendation engine

- Knowledge graph

- Fraud detection

- Network analysis

import neo4j from 'neo4j-driver';

const driver = neo4j.driver(

'bolt://localhost:7687',

neo4j.auth.basic('neo4j', 'password')

);

const session = driver.session();

// Create relationship

async function connectUsers(userId1: string, userId2: string) {

await session.run(

'MATCH (u1:User {id: $id1}), (u2:User {id: $id2}) CREATE (u1)-[:FOLLOWS]->(u2)',

{ id1: userId1, id2: userId2 }

);

}

// Find mutual connection

async function findMutualFriends(userId: string) {

const result = await session.run(

`MATCH (u:User {id: $userId})-[:FOLLOWS]->(friend)-[:FOLLOWS]->(mutual)

WHERE NOT (u)-[:FOLLOWS]->(mutual)

RETURN mutual.name, COUNT(*) as mutualCount

ORDER BY mutualCount DESC

LIMIT 10`,

{ userId }

);

return result.records.map(record => ({

name: record.get('mutual.name'),

mutualCount: record.get('mutualCount').toNumber()

}));

}

// Recommendation berdasarkan graph

async function recommendProducts(userId: string) {

const result = await session.run(

`MATCH (u:User {id: $userId})-[:PURCHASED]->(p:Product)

MATCH (p)-[:SIMILAR_TO]->(recommended:Product)

WHERE NOT (u)-[:PURCHASED]->(recommended)

RETURN recommended.name, COUNT(*) as score

ORDER BY score DESC

LIMIT 5`,

{ userId }

);

return result.records.map(record => record.get('recommended.name'));

}

await session.close();

await driver.close();#Time-Series Database

#Apa Itu

Dioptimalkan untuk menyimpan dan query time-stamped data point. Compress data efficiently dan support fast range query.

Common example: InfluxDB, TimescaleDB, Prometheus, QuestDB

#Kapan Menggunakan

- Monitoring dan observability

- Metric collection

- Stock price dan financial data

- IoT sensor data

- Application performance monitoring

import { InfluxDB, Point } from '@influxdata/influxdb-client';

const influxDB = new InfluxDB({

url: 'http://localhost:8086',

token: process.env.INFLUX_TOKEN,

org: 'myorg',

bucket: 'metrics'

});

const writeApi = influxDB.getWriteApi('myorg', 'metrics');

// Write metric

async function recordServerMetrics(serverId: string, metrics: any) {

const point = new Point('server_metrics')

.tag('server_id', serverId)

.tag('region', 'us-east-1')

.floatField('cpu_usage', metrics.cpu)

.floatField('memory_usage', metrics.memory)

.floatField('disk_usage', metrics.disk)

.intField('request_count', metrics.requests)

.timestamp(new Date());

writeApi.writePoint(point);

await writeApi.flush();

}

// Query metric

const queryApi = influxDB.getQueryApi('myorg');

async function getMetricsForPeriod(serverId: string, hours: number = 24) {

const query = `

from(bucket: "metrics")

|> range(start: -${hours}h)

|> filter(fn: (r) => r.server_id == "${serverId}")

|> filter(fn: (r) => r._measurement == "server_metrics")

`;

const results: any[] = [];

await queryApi.queryRows(query, {

next(row, tableMeta) {

results.push({

time: row.values[tableMeta.toObject().time],

field: row.values[tableMeta.toObject()._field],

value: row.values[tableMeta.toObject()._value]

});

},

error(error) {

console.error('Query error:', error);

},

complete() {

console.log('Query complete');

}

});

return results;

}#Polyglot Persistence dalam Aksi

Berikut adalah bagaimana real system menggabungkan multiple database:

import { Pool } from 'pg';

import { createClient } from 'redis';

import { Client as ElasticsearchClient } from '@elastic/elasticsearch';

import { Pinecone } from '@pinecone-database/pinecone';

// Initialize semua database

const postgres = new Pool({ connectionString: process.env.DATABASE_URL });

const redis = createClient({ url: process.env.REDIS_URL });

const elasticsearch = new ElasticsearchClient({ node: process.env.ELASTICSEARCH_URL });

const pinecone = new Pinecone({ apiKey: process.env.PINECONE_API_KEY });

await redis.connect();

// User create blog post

async function createBlogPost(userId: string, post: any) {

// 1. Store dalam PostgreSQL (source of truth)

const result = await postgres.query(

'INSERT INTO posts (user_id, title, content, created_at) VALUES ($1, $2, $3, NOW()) RETURNING id',

[userId, post.title, post.content]

);

const postId = result.rows[0].id;

// 2. Index dalam Elasticsearch (untuk full-text search)

await elasticsearch.index({

index: 'blog_posts',

id: postId,

document: {

title: post.title,

content: post.content,

userId,

createdAt: new Date()

}

});

// 3. Generate embedding dan store dalam vector DB (untuk recommendation)

const embedding = await generateEmbedding(post.content);

await pinecone.Index('blog_posts').upsert([{

id: postId,

values: embedding,

metadata: { userId, title: post.title }

}]);

// 4. Cache dalam Redis (untuk fast access)

await redis.setEx(`post:${postId}`, 3600, JSON.stringify({

id: postId,

title: post.title,

userId,

createdAt: new Date()

}));

return postId;

}

// User search post

async function searchPosts(query: string) {

// Gunakan Elasticsearch untuk full-text search

const results = await elasticsearch.search({

index: 'blog_posts',

body: {

query: {

multi_match: {

query,

fields: ['title^2', 'content']

}

}

}

});

return results.hits.hits.map(hit => hit._source);

}

// Get recommendation

async function getRecommendations(postId: string) {

// Gunakan vector DB untuk semantic similarity

const results = await pinecone.Index('blog_posts').query({

id: postId,

topK: 5,

includeMetadata: true

});

return results.matches;

}#Kesalahan & Jebakan Umum

#Menggunakan Satu Database untuk Semuanya

Masalah: Coba gunakan PostgreSQL untuk caching, search, dan real-time feature.

Mengapa terjadi: Simplicity bias. Satu database terlihat lebih mudah untuk manage.

Solusi: Accept polyglot persistence. Setiap tool memiliki job. PostgreSQL tidak cepat dalam full-text search—Elasticsearch adalah.

#Tidak Index Dengan Benar

Masalah: Query slow down saat data berkembang.

Mengapa terjadi: Developer lupa index ada atau tidak mengerti query plan.

Solusi: Gunakan EXPLAIN dalam SQL, monitor slow query, index frequently filtered column.

-- PostgreSQL contoh

EXPLAIN ANALYZE SELECT * FROM users WHERE email = 'user@example.com';

CREATE INDEX idx_users_email ON users(email);#Mengabaikan Consistency Requirement

Masalah: Gunakan eventual consistency (NoSQL) di mana strong consistency (SQL) diperlukan.

Mengapa terjadi: NoSQL terlihat lebih cepat, jadi developer default ke itu.

Solusi: Pahami consistency need Anda. Financial transaction perlu ACID. User preference dapat eventually consistent.

#Tidak Plan untuk Scale

Masalah: Database bekerja baik dengan 1M row, break pada 100M.

Mengapa terjadi: Tidak ada load testing atau capacity planning.

Solusi: Test dengan realistic data volume. Pahami database limit Anda. Plan sharding/partitioning early.

#Menyimpan Semuanya dalam Cache

Masalah: Cache miss menyebabkan cascading failure.

Mengapa terjadi: Developer treat cache sebagai primary storage.

Solusi: Cache adalah performance layer, bukan data store. Selalu memiliki source of truth.

#Best Practice

#1. Pilih Berdasarkan Access Pattern

Sebelum pick database, tanya:

- Bagaimana data akan di-query?

- Apa read/write ratio?

- Apakah saya perlu transaction?

- Berapa banyak data?

- Apa latency requirement?

#2. Gunakan Connection Pooling

Jangan create new connection untuk setiap query.

import { Pool } from 'pg';

const pool = new Pool({

max: 20, // Maximum connection

idleTimeoutMillis: 30000,

connectionTimeoutMillis: 2000,

});

// Reuse connection

const result = await pool.query('SELECT * FROM users WHERE id = $1', [userId]);#3. Monitor Query Performance

async function monitoredQuery(query: string, params: any[]) {

const start = Date.now();

try {

const result = await pool.query(query, params);

const duration = Date.now() - start;

if (duration > 1000) {

console.warn(`Slow query (${duration}ms):`, query);

}

return result;

} catch (error) {

console.error('Query failed:', error);

throw error;

}

}#4. Implementasikan Proper Caching Strategy

async function getUser(userId: string) {

// Try cache terlebih dahulu

const cached = await redis.get(`user:${userId}`);

if (cached) return JSON.parse(cached);

// Cache miss—fetch dari database

const user = await postgres.query(

'SELECT * FROM users WHERE id = $1',

[userId]

);

if (user.rows.length === 0) return null;

// Store dalam cache

await redis.setEx(`user:${userId}`, 3600, JSON.stringify(user.rows[0]));

return user.rows[0];

}#5. Gunakan Transaction untuk Multi-Step Operation

async function transferMoney(fromId: string, toId: string, amount: number) {

const client = await pool.connect();

try {

await client.query('BEGIN ISOLATION LEVEL SERIALIZABLE');

// Deduct dari source

await client.query(

'UPDATE accounts SET balance = balance - $1 WHERE id = $2',

[amount, fromId]

);

// Add ke destination

await client.query(

'UPDATE accounts SET balance = balance + $1 WHERE id = $2',

[amount, toId]

);

// Record transaction

await client.query(

'INSERT INTO transactions (from_id, to_id, amount) VALUES ($1, $2, $3)',

[fromId, toId, amount]

);

await client.query('COMMIT');

} catch (error) {

await client.query('ROLLBACK');

throw error;

} finally {

client.release();

}

}#Kapan TIDAK Menggunakan Setiap Type

#Jangan Gunakan SQL Ketika:

- Anda perlu extreme horizontal scalability

- Data Anda adalah unstructured (document, image)

- Anda perlu sub-millisecond latency untuk simple lookup

#Jangan Gunakan NoSQL Ketika:

- Anda perlu complex join di banyak collection

- Data integrity adalah critical

- Anda perlu ACID transaction

#Jangan Gunakan Redis Ketika:

- Anda perlu persistent storage (itu in-memory)

- Dataset Anda lebih besar daripada available RAM

- Anda perlu complex query

#Jangan Gunakan Vector DB Ketika:

- Anda tidak memiliki embedding

- Anda perlu exact match (gunakan SQL)

- Data Anda tidak benefit dari similarity search

#Jangan Gunakan Search Engine Ketika:

- Anda perlu real-time consistency

- Query Anda adalah simple key lookup

- Anda memiliki small dataset

#Kesimpulan

Modern system tidak menggunakan satu database—mereka menggunakan database yang tepat untuk setiap job. PostgreSQL untuk transaction, Redis untuk caching, Elasticsearch untuk search, vector database untuk AI feature.

Mulai dengan PostgreSQL. Tambahkan Redis ketika Anda perlu speed. Tambahkan Elasticsearch ketika search menjadi penting. Tambahkan vector database ketika Anda build AI feature.

Kunci adalah memahami access pattern Anda dan memilih accordingly. Polyglot persistence bukan complexity—itu pragmatism.