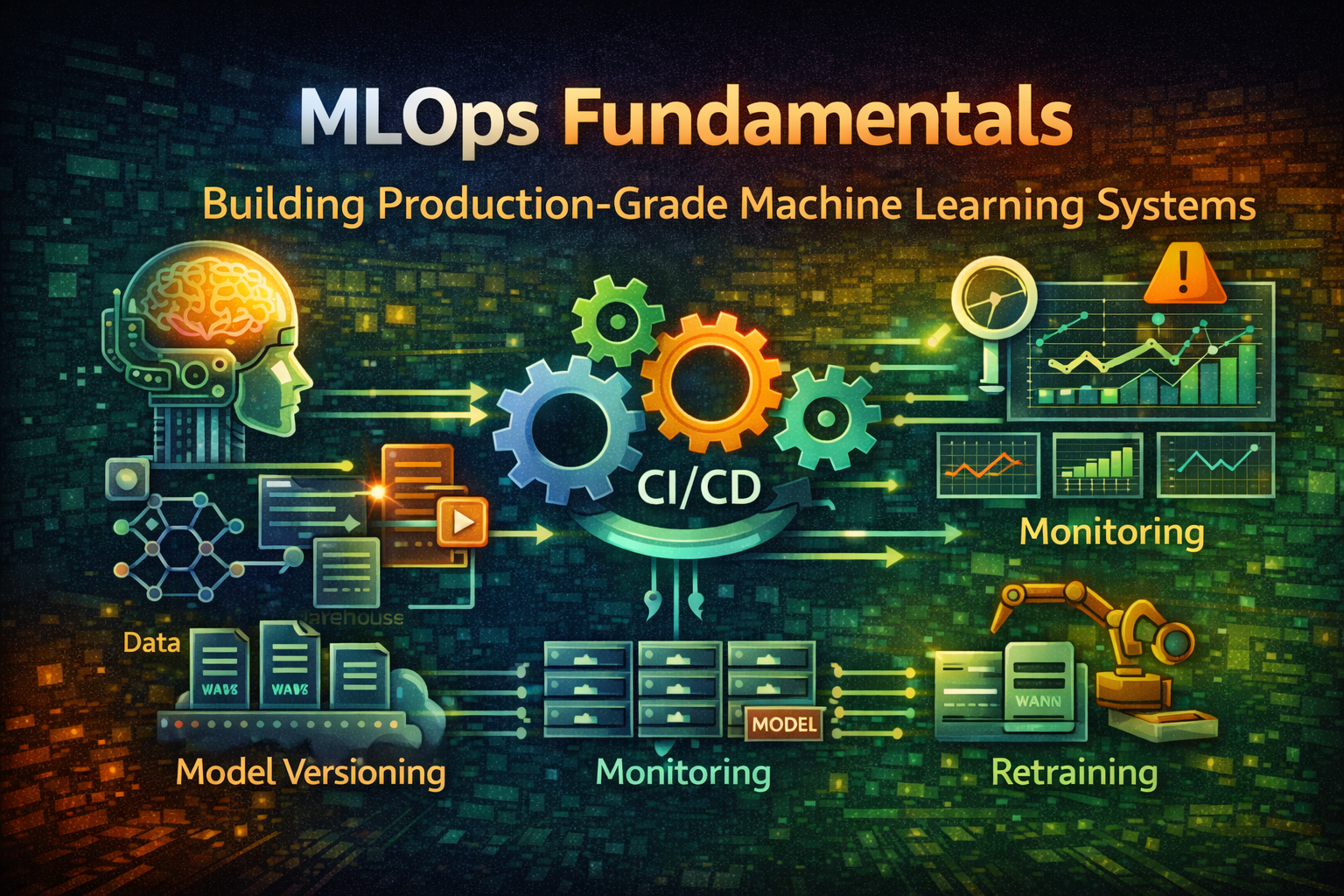

MLOps Fundamentals: Building Production-Grade Machine Learning Systems

Master the MLOps essentials for deploying, monitoring, and maintaining machine learning models in production. Learn about model versioning, CI/CD pipelines, monitoring, and retraining strategies.

# Introduction

Building a machine learning model is one thing. Deploying it to production and keeping it working reliably is something else entirely.

Many teams can train a model that reaches 95% accuracy in a Jupyter notebook. Moving that model into production is much harder. There it must handle real data, scale to millions of predictions, and keep its performance over time, which requires a completely different skill set.

This is where MLOps comes in. MLOps (Machine Learning Operations) applies DevOps principles to machine learning systems. It is about automating the entire lifecycle, from data preparation through model training, validation, deployment, monitoring, and retraining.

Without MLOps, you end up with models that degrade silently, data pipelines that break unexpectedly, and no way to debug what went wrong. With MLOps, you have ML systems that are reproducible, reliable, and maintainable.

# The ML Lifecycle vs. the Software Development Lifecycle

## Traditional Software Development

Code → Build → Test → Deploy → Monitor → Maintain

Clear stages, deterministic outcomes, and version control at every step.

## Machine Learning Development

Data → Feature Engineering → Model Training → Evaluation → Deployment → Monitoring → Retraining

This is more complex because:

- Data is code: Changes in the data change the model's behavior
- Non-deterministic: The same code plus the same data can produce a different model (for example, random initialization or data shuffling without a fixed seed)
- Continuous degradation: Models degrade as real-world data drifts
- Feedback loop: Production predictions influence future training data
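The non-determinism point is easy to see in a toy example. The sketch below uses a hypothetical `train_toy_model` that fits a line with stochastic gradient descent, written in pure Python. Both its initial weights and its per-epoch shuffle order are random. Fixing the seed makes two runs bit-for-bit identical, while unseeded runs generally differ.

```python
import random


def train_toy_model(data, epochs=20, lr=0.05, seed=None):
    """Fit y = w*x + b with SGD (hypothetical toy trainer).

    Both the initial weights and the per-epoch shuffle order are random.
    """
    rng = random.Random(seed)  # seed=None -> seeded from OS randomness
    w, b = rng.uniform(-1, 1), rng.uniform(-1, 1)
    samples = list(data)
    for _ in range(epochs):
        rng.shuffle(samples)  # a different order gives a different gradient path
        for x, y in samples:
            err = (w * x + b) - y
            w -= lr * err * x
            b -= lr * err
    return w, b


data = [(x / 10, 2 * (x / 10) + 1) for x in range(10)]

print(train_toy_model(data, seed=42) == train_toy_model(data, seed=42))  # True
print(train_toy_model(data), train_toy_model(data))  # usually two different results
```

In a real pipeline, the same effect comes from unseeded library RNGs, parallel reductions, and GPU kernels. This is why the reproducibility advice later in this article starts with setting seeds.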

## The MLOps Difference

MLOps bridges this gap by treating ML systems like software systems:

Data Pipeline → Feature Store → Model Training → Model Registry → Deployment → Monitoring → Retraining

- Data pipeline, feature store, model training, model registry, deployment: Version control
- Monitoring: Metrics tracking
- Retraining: Automated triggers

Every component is versioned, tested, and monitored.

# Core MLOps Components

## 1. Data Pipeline & Versioning

An ML model is only as good as its training data. The data pipeline must be reproducible and versioned.

Data pipeline architecture:

```
Raw Data Source
      ↓
Data Validation (Schema, Quality)
      ↓
Feature Engineering
      ↓
Feature Store (Versioned)
      ↓
Training Dataset (Versioned)
```

Example: a data pipeline with DVC (Data Version Control):

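```bash
git init
dvc init
```

Track the data file:

```bash
dvc add data/raw/training_data.csv
# dvc add writes data/raw/.gitignore so Git ignores the raw file itself
git add data/raw/training_data.csv.dvc data/raw/.gitignore
git commit -m "Add training data v1"
```

DVC keeps the file contents in its cache. After `dvc push`, it also keeps them in remote storage such as S3 or GCS. Git versions only the small `.dvc` pointer file:

```bash
# Show the history of the data file via its Git-tracked pointer
git log --oneline -- data/raw/training_data.csv.dvc

# Check out a previous data version
git checkout <commit-hash>
dvc checkout
```

This gives you reproducibility: given a commit hash, you can recreate the exact training dataset. If the data is not in the local cache, run `dvc pull` first.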
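Conceptually, DVC identifies each data version by an MD5 content hash recorded in the `.dvc` pointer file, so any change to the bytes produces a new version. The sketch below imitates that idea with `hashlib`. `pointer_record` is a hypothetical, simplified stand-in, not DVC's actual file format or hashing rules.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def file_md5(path, chunk_size=1 << 20):
    """MD5 of a file's bytes, read in chunks so large files need little memory."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def pointer_record(path):
    """Hypothetical, simplified version record loosely modeled on a .dvc file."""
    p = Path(path)
    return {"path": p.name, "md5": file_md5(p), "size": p.stat().st_size}


with tempfile.TemporaryDirectory() as tmp:
    data = Path(tmp) / "training_data.csv"
    data.write_bytes(b"user_id,label\n1,0\n2,1\n")
    v1 = pointer_record(data)
    data.write_bytes(b"user_id,label\n1,0\n2,1\n3,1\n")  # appended rows -> new version
    v2 = pointer_record(data)

print(json.dumps(v1, indent=2))
print("changed:", v1["md5"] != v2["md5"])
```

Logging such a hash next to each training run ties every model to the exact bytes it was trained on.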

## 2. Feature Store

A feature store is a centralized repository for features (derived data used as model inputs). It solves several problems:

- Feature reuse: Multiple models use the same features
- Training-serving skew: Features are computed identically in training and in production
- Feature versioning: Feature definitions are tracked over time
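Training-serving skew shrinks considerably when both paths call one feature definition instead of two separately maintained implementations. Below is a minimal sketch. It assumes hypothetical raw inputs: a list of order amounts and a signup date per user. A feature store generalizes this by also storing the computed values, so training and serving read the same numbers.

```python
from datetime import date


def compute_user_features(purchases, signup_date, as_of):
    """Single feature definition shared by the training and serving paths.

    purchases: list of order amounts for one user (hypothetical input shape).
    """
    total = float(sum(purchases))
    return {
        "total_purchases": total,
        "avg_order_value": total / len(purchases) if purchases else 0.0,
        "days_since_signup": (as_of - signup_date).days,
    }


def build_training_rows(users, as_of):
    """Batch path: compute features for many users at once."""
    return {uid: compute_user_features(p, s, as_of) for uid, (p, s) in users.items()}


def features_for_request(purchases, signup_date, as_of):
    """Online path: compute features for one request with the same function."""
    return compute_user_features(purchases, signup_date, as_of)


users = {123: ([20.0, 35.5, 12.5], date(2024, 1, 10))}
as_of = date(2024, 3, 1)
batch = build_training_rows(users, as_of)
online = features_for_request([20.0, 35.5, 12.5], date(2024, 1, 10), as_of)
print(batch[123] == online)  # True: both paths share one definition
```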

Feature store architecture:

```
           Raw Data
               ↓
      Feature Computation
               ↓
┌─────────────────────────────┐
│        Feature Store        │
├─────────────────────────────┤
│ Batch Features (Historical) │
│ Real-time Features (Online) │
└─────────────────────────────┘
        ↓                   ↓
Training Pipeline   Serving Pipeline
```

Example: Feast feature store

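```python
from datetime import timedelta

from feast import Entity, FeatureService, FeatureView, Field, FileSource
from feast.types import Float32, Int32, Int64

# Define the entity (the join key used to look features up)
user = Entity(name="user_id", join_keys=["user_id"])

# Define the feature view. The file source needs a timestamp column so Feast
# can do point-in-time-correct joins; "event_timestamp" is assumed here.
user_features = FeatureView(
    name="user_features",
    entities=[user],
    ttl=timedelta(days=1),
    schema=[
        Field(name="user_id", dtype=Int64),
        Field(name="total_purchases", dtype=Float32),
        Field(name="avg_order_value", dtype=Float32),
        Field(name="days_since_signup", dtype=Int32),
    ],
    source=FileSource(
        path="data/user_features.parquet",
        timestamp_field="event_timestamp",
    ),
)

# Define a feature service that groups the features one model consumes
user_service = FeatureService(
    name="user_service",
    features=[user_features],
)
```

After running `feast apply` to register these definitions, retrieve features for training: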

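```python
import pandas as pd
from feast import FeatureStore

fs = FeatureStore(repo_path=".")

# entity_df must contain the join key and an event_timestamp column; Feast
# returns feature values as they were at each row's timestamp.
entity_df = pd.read_csv("data/user_ids.csv", parse_dates=["event_timestamp"])

# Get historical features for training
training_df = fs.get_historical_features(
    entity_df=entity_df,
    features=[
        "user_features:total_purchases",
        "user_features:avg_order_value",
        "user_features:days_since_signup",
    ],
).to_df()
```

Retrieve features for serving (real time). This reads from the online store, which must first be loaded with `feast materialize` or `feast materialize-incremental`: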

```python
# Get the latest feature values for a prediction request
features = fs.get_online_features(
    features=[
        "user_features:total_purchases",
        "user_features:avg_order_value",
        "user_features:days_since_signup",
    ],
    entity_rows=[{"user_id": 123}],
).to_dict()
```

The same features, computed identically, serve both training and inference.
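`get_historical_features` performs a point-in-time join. For each entity row, it returns the feature values that were valid at that row's `event_timestamp`, never later ones, and it ignores values older than the feature view's `ttl`. The naive pure-Python sketch below only illustrates those semantics. `point_in_time_join` is a hypothetical helper, not how Feast implements the join.

```python
from datetime import datetime, timedelta


def point_in_time_join(entity_rows, feature_rows, feature, ttl):
    """Attach to each entity row the latest feature value observed at or before
    the row's event_timestamp and no older than ttl (None if there is none)."""
    joined = []
    for row in entity_rows:
        t = row["event_timestamp"]
        candidates = [
            f for f in feature_rows
            if f["user_id"] == row["user_id"] and t - ttl <= f["event_timestamp"] <= t
        ]
        latest = max(candidates, key=lambda f: f["event_timestamp"], default=None)
        joined.append({**row, feature: latest[feature] if latest else None})
    return joined


feature_rows = [
    {"user_id": 123, "event_timestamp": datetime(2024, 3, 1), "total_purchases": 50.0},
    {"user_id": 123, "event_timestamp": datetime(2024, 3, 2), "total_purchases": 80.0},
]
entity_rows = [
    {"user_id": 123, "event_timestamp": datetime(2024, 3, 1, 12)},  # before the 80.0 update
    {"user_id": 123, "event_timestamp": datetime(2024, 3, 2, 6)},   # after it
    {"user_id": 123, "event_timestamp": datetime(2024, 3, 5)},      # all values older than ttl
]
result = point_in_time_join(entity_rows, feature_rows, "total_purchases", ttl=timedelta(days=1))
print([r["total_purchases"] for r in result])  # [50.0, 80.0, None]
```

Using the latest value regardless of timestamp would leak future information into training rows. This is a subtle source of training-serving skew.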

## 3. Model Training & Versioning

Model training must be reproducible and tracked.

Training pipeline:

```python
import mlflow
import mlflow.sklearn
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

params = {"n_estimators": 100, "max_depth": 10, "random_state": 42}

# Start an MLflow run
with mlflow.start_run():
    # Log hyperparameters
    mlflow.log_params(params)

    # Load features (load_features is a placeholder for your own loader,
    # e.g. the Feast retrieval shown above)
    X, y = load_features()
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.2, random_state=42
    )

    # Train with exactly the parameters that were logged
    model = RandomForestClassifier(**params)
    model.fit(X_train, y_train)

    # Evaluate
    train_accuracy = model.score(X_train, y_train)
    test_accuracy = model.score(X_test, y_test)

    # Log metrics
    mlflow.log_metric("train_accuracy", train_accuracy)
    mlflow.log_metric("test_accuracy", test_accuracy)

    # Log the model as a run artifact
    mlflow.sklearn.log_model(model, "model")
```

MLflow tracks:

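- Parameters (hyperparameters)
- Metrics (accuracy, precision, recall)
- Artifacts (model files, plots)
- Code version (the Git commit, typically recorded automatically when the run starts from a script inside a Git repository)
- Data version (only if you log it yourself, e.g. the DVC hash as a tag or parameter)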

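Model registry:

```python
import mlflow

# Register the model logged by a run ("abc123" is a placeholder run ID)
model_uri = "runs:/abc123/model"
mv = mlflow.register_model(model_uri, "fraud_detection")

client = mlflow.tracking.MlflowClient()

# Transition to Staging, using the version number returned by register_model.
# Note: registry stages are deprecated as of MLflow 2.9; newer code moves an
# alias instead, e.g. client.set_registered_model_alias("fraud_detection", "champion", mv.version)
client.transition_model_version_stage(
    name="fraud_detection",
    version=mv.version,
    stage="Staging",
)

# Transition to Production
client.transition_model_version_stage(
    name="fraud_detection",
    version=mv.version,
    stage="Production",
)
```

The model registry provides: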

- Version history
- Stage transitions (Dev → Staging → Production), or aliases in newer MLflow versions
- Metadata and annotations
- Support for approval workflows (e.g. promoting a version only after review)
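Here is a hypothetical in-memory registry that makes these concepts concrete. It is not MLflow's API. It stores immutable versions and moves a named pointer such as `production` between them. Keeping an audit log of pointer moves is what makes a one-step rollback cheap.

```python
from dataclasses import dataclass, field


@dataclass
class ModelRegistry:
    """Hypothetical in-memory registry: immutable versions plus movable aliases."""
    versions: dict = field(default_factory=dict)  # version number -> artifact location
    aliases: dict = field(default_factory=dict)   # alias -> version number
    history: list = field(default_factory=list)   # audit log: (alias, old, new)

    def register(self, uri):
        version = len(self.versions) + 1
        self.versions[version] = uri
        return version

    def set_alias(self, alias, version):
        if version not in self.versions:
            raise KeyError(f"unknown version {version}")
        self.history.append((alias, self.aliases.get(alias), version))
        self.aliases[alias] = version

    def resolve(self, alias):
        return self.versions[self.aliases[alias]]

    def rollback(self, alias):
        """Undo the most recent move of `alias` by pointing it at its previous version."""
        for name, previous, _ in reversed(self.history):
            if name == alias and previous is not None:
                self.set_alias(alias, previous)
                return previous
        raise LookupError(f"no earlier version for {alias!r}")


reg = ModelRegistry()
v1 = reg.register("models/fraud_detection/1")  # hypothetical artifact locations
v2 = reg.register("models/fraud_detection/2")
reg.set_alias("production", v1)
reg.set_alias("production", v2)  # promote v2
reg.rollback("production")       # v2 misbehaves: point back to v1
print(reg.resolve("production"))  # models/fraud_detection/1
```

Serving code that always loads "whatever `production` points to" never needs a redeploy for a promotion or a rollback.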

## 4. Model Validation & Testing

Before deployment, a model must pass rigorous tests.

Validation checks:

```python
import time

import numpy as np
from sklearn.metrics import precision_score, recall_score


def validate_model(model, X_test, y_test, groups=None):
    """Validate a model before deployment; raises AssertionError on failure.

    Note: assert statements are skipped under `python -O`; in a real
    pipeline, raise explicit exceptions instead.
    """
    # 1. Performance threshold
    accuracy = model.score(X_test, y_test)
    assert accuracy >= 0.90, f"Accuracy {accuracy:.3f} below threshold"

    # 2. Precision/recall balance (binary labels assumed)
    y_pred = model.predict(X_test)
    assert precision_score(y_test, y_pred) >= 0.85, "Precision too low"
    assert recall_score(y_test, y_pred) >= 0.80, "Recall too low"

    # 3. Fairness check: per-group accuracy must stay close to overall accuracy.
    # `groups` holds a group label per test row (e.g. a sensitive attribute
    # that is kept out of the model's input features).
    if groups is not None:
        groups = np.asarray(groups)
        for group in np.unique(groups):
            mask = groups == group
            group_accuracy = model.score(X_test[mask], np.asarray(y_test)[mask])
            assert abs(group_accuracy - accuracy) < 0.05, f"Bias detected in {group}"

    # 4. Prediction stability: same input -> same output
    assert np.array_equal(y_pred, model.predict(X_test)), "Non-deterministic predictions"

    # 5. Latency check: average time for a single-row prediction
    start = time.perf_counter()
    for _ in range(1000):
        model.predict(X_test[:1])
    latency = (time.perf_counter() - start) / 1000
    assert latency < 0.1, f"Latency {latency:.4f}s exceeds threshold"

    return True
```

## 5. Model Deployment

Deploy the model as a versioned, reproducible artifact.

Deployment architecture:

```
        Model Registry
               ↓
   Model Serving (REST API)
               ↓
┌─────────────────────────────┐
│        Load Balancer        │
├─────────────────────────────┤
│ Replica 1 │ Replica 2 │ ... │
└─────────────────────────────┘
               ↓
     Monitoring & Logging
```

Example: deploying with BentoML

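```python
# train.py: train the model and save it to the local BentoML model store
import bentoml
from sklearn.ensemble import RandomForestClassifier

model = RandomForestClassifier(random_state=42)
model.fit(X_train, y_train)  # X_train, y_train come from your training pipeline
bentoml.sklearn.save_model("fraud_detector", model)
```

```python
# service.py: the serving code (BentoML 1.2+ class-based API)
import bentoml


@bentoml.service
class FraudDetectionService:
    # Reference to the latest saved version of the model
    model_ref = bentoml.models.get("fraud_detector:latest")

    def __init__(self):
        # Load the model once per worker instead of on every request
        self.model = bentoml.sklearn.load_model(self.model_ref)

    @bentoml.api
    def predict(self, features: dict) -> dict:
        # Values must arrive in training column order; map keys explicitly in
        # real code rather than relying on the request's key order.
        row = [list(features.values())]
        # predict() returns a class label; predict_proba() returns probabilities
        probability = self.model.predict_proba(row)[0][1]
        return {"fraud_probability": float(probability)}
```

Deploy:

```bash
# Serve locally from source (module service.py, class FraudDetectionService)
bentoml serve service:FraudDetectionService

# Package a versioned Bento (assumes a bentofile.yaml pointing at the service),
# then build a container image; use the Bento tag printed by `bentoml build`
bentoml build
bentoml containerize fraud_detection_service:latest
```

Building and containerizing the Bento produces a versioned model service image that is ready for production deployment.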
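The `predict` endpoint above receives a dict, while the scikit-learn model expects columns in exactly the order used during training. A small helper like the one below could build the row explicitly and reject malformed requests, instead of silently misordering features. It uses hypothetical feature names taken from the Feast example.

```python
FEATURE_ORDER = ["total_purchases", "avg_order_value", "days_since_signup"]  # hypothetical


def to_feature_vector(payload, feature_order=FEATURE_ORDER):
    """Build the model input row in the exact column order used at training time."""
    missing = [name for name in feature_order if name not in payload]
    if missing:
        raise ValueError(f"missing features: {missing}")
    unexpected = sorted(set(payload) - set(feature_order))
    if unexpected:
        raise ValueError(f"unexpected features: {unexpected}")
    return [float(payload[name]) for name in feature_order]


# Key order in the request does not matter
row = to_feature_vector({"days_since_signup": 51, "total_purchases": 68.0, "avg_order_value": 22.5})
print(row)  # [68.0, 22.5, 51.0]
```

Inside the service, `predict` would call `self.model.predict_proba([to_feature_vector(features)])`. Storing `FEATURE_ORDER` alongside the model artifact keeps the two from drifting apart.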

## 6. Model Monitoring & Observability

Models degrade in production. Monitoring detects issues before they affect users.

What to monitor:

```
┌─────────────────────────────────────────┐
│ Model Performance Metrics               │
├─────────────────────────────────────────┤
│ • Accuracy, precision, recall           │
│ • Latency, throughput                   │
│ • Error rate                            │
└─────────────────────────────────────────┘
┌─────────────────────────────────────────┐
│ Data Drift Detection                    │
├─────────────────────────────────────────┤
│ • Feature distribution changes          │
│ • Prediction distribution changes       │
│ • Outlier detection                     │
└─────────────────────────────────────────┘
┌─────────────────────────────────────────┐
│ System Metrics                          │
├─────────────────────────────────────────┤
│ • CPU, memory, disk usage               │
│ • Request latency, error rate           │
│ • Model serving availability            │
└─────────────────────────────────────────┘
```

Example: data drift detection

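```python
from scipy.stats import ks_2samp


def detect_drift(reference_data, current_data, alpha=0.05):
    """Return the numeric features whose distribution drifted from the reference.

    Runs a two-sample Kolmogorov-Smirnov test per feature. Testing many
    features at a fixed alpha causes false alarms, so a Bonferroni
    correction (alpha / number of features) is applied.
    """
    features = list(reference_data.columns)
    corrected_alpha = alpha / len(features)
    drifted = []
    for feature in features:
        statistic, p_value = ks_2samp(
            reference_data[feature].dropna(),
            current_data[feature].dropna(),
        )
        if p_value < corrected_alpha:
            print(f"DRIFT DETECTED: {feature} (KS={statistic:.3f}, p={p_value:.2e})")
            drifted.append(feature)
    return drifted


# Monitoring in production (the loaders and the trigger are placeholders)
reference = load_training_data()   # features the model was trained on
current = load_recent_features()   # the same features from recent traffic

if detect_drift(reference, current):
    # Trigger retraining
    trigger_retraining_pipeline()
```

## 7. Automated Retraining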

Models degrade over time. Retraining should be automated and triggered by data drift or performance degradation.

Retraining pipeline:

```
Monitor Model Performance
          ↓
Detect Drift or Degradation
          ↓
Trigger Retraining
          ↓
Train New Model
          ↓
Validate New Model
          ↓
A/B Test (Optional)
          ↓
Deploy or Roll Back
```

Example: automated retraining trigger

```python
import time

import schedule


def check_model_health():
    """Check whether the model needs retraining.

    evaluate_model_on_recent_data, detect_data_drift and trigger_retraining_job
    are placeholders for your own monitoring and pipeline code.
    """
    # Current model performance on recent labeled data
    current_accuracy = evaluate_model_on_recent_data()
    baseline_accuracy = 0.90

    # Check for drift
    has_drift = detect_data_drift()

    # Retrain if accuracy fell more than 5% below baseline or drift was found
    if current_accuracy < baseline_accuracy * 0.95 or has_drift:
        print("Triggering retraining...")
        trigger_retraining_job()
        return True
    return False


# Schedule a daily check at 02:00 (local time of the process)
schedule.every().day.at("02:00").do(check_model_health)

while True:
    schedule.run_pending()
    time.sleep(60)
```

# MLOps Workflow: End-to-End Example

Here is a complete MLOps workflow:

```
1. Data Preparation
   ├── Collect raw data
   ├── Version with DVC
   └── Validate schema & quality
2. Feature Engineering
   ├── Compute features
   ├── Store in the feature store
   └── Version feature definitions
3. Model Training
   ├── Load features from the feature store
   ├── Train the model with MLflow tracking
   ├── Log parameters, metrics, artifacts
   └── Register the model in the model registry
4. Model Validation
   ├── Performance tests
   ├── Fairness checks
   ├── Latency tests
   └── Approve for deployment
5. Deployment
   ├── Build container image
   ├── Deploy to staging
   ├── Run smoke tests
   ├── Deploy to production
   └── Monitor health
6. Monitoring
   ├── Track predictions
   ├── Detect data drift
   ├── Monitor performance metrics
   └── Alert on anomalies
7. Retraining
   ├── Detect drift or degradation
   ├── Trigger retraining pipeline
   ├── Validate new model
   └── Deploy or roll back
```

# MLOps Tools Landscape

## Experiment Tracking & Model Registry

- MLflow: Open-source, language-agnostic
- Weights & Biases: Cloud-based, collaborative
- Neptune: Experiment tracking and model registry
- Kubeflow: Kubernetes-native ML workflows

## Feature Stores

- Feast: Open-source, multi-cloud
- Tecton: Enterprise feature platform
- Hopsworks: Feature store with governance
- Databricks Feature Store: Integrated with Databricks

## Model Serving

- BentoML: Python-first model serving
- KServe: Kubernetes-native model serving
- Seldon Core: Model serving on Kubernetes
- Ray Serve: Distributed model serving

## Monitoring & Observability

- Evidently: Data and model drift detection
- Arize: ML observability platform
- Fiddler: Model monitoring and explainability
- WhyLabs: Data and model monitoring

## Orchestration

- Airflow: Workflow orchestration
- Prefect: Modern workflow orchestration
- Dagster: Data orchestration
- Kubeflow Pipelines: ML-specific orchestration

# Common Mistakes & Pitfalls

## Mistake 1: No Version Control for Data & Models

The problem: You cannot reproduce past results or debug issues.

Why it happens: Teams focus on versioning code and forget about data.

How to avoid it:

- Use DVC for data versioning
- Use MLflow for model versioning
- Track data lineage
- Document data transformations

## Mistake 2: Training-Serving Skew

The problem: The model performs well in training but poorly in production.

Why it happens: Features are computed differently in training and in serving.

How to avoid it:

- Use a feature store
- Compute features identically in both pipelines
- Test the serving pipeline before deployment
- Monitor the prediction distribution

## Mistake 3: No Model Validation

The problem: Bad models get deployed to production.

Why it happens: Teams rush to deploy without thorough testing.

How to avoid it:

- Implement automated validation checks
- Test for fairness and bias
- Validate latency and throughput
- Require manual approval before production
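One common form of automated validation is a champion/challenger gate. The candidate is compared with the current production model on the same holdout data. It is promoted only if it is at least as good on the primary metric and does not regress elsewhere. Below is a minimal sketch. The metric names and the `min_gain` and `max_drop` values are assumptions to tune per use case.

```python
def should_promote(candidate, champion, primary="auc", min_gain=0.0, max_drop=0.01):
    """Champion/challenger gate (hypothetical policy).

    Both arguments map metric name -> value (higher is better), computed on the
    same holdout data. Returns (decision, reasons for rejection).
    """
    reasons = []
    gain = candidate[primary] - champion[primary]
    if gain < min_gain:
        reasons.append(f"{primary} gain {gain:+.4f} below required {min_gain:+.4f}")
    # Secondary metrics may not fall more than max_drop below the champion
    for name, old in champion.items():
        if name != primary and candidate.get(name, float("-inf")) < old - max_drop:
            reasons.append(f"{name} dropped from {old:.4f} to {candidate.get(name)}")
    return not reasons, reasons


champion = {"auc": 0.91, "recall": 0.80}
ok, reasons = should_promote({"auc": 0.93, "recall": 0.70}, champion)
print(ok, reasons)  # better AUC, but recall regressed -> rejected
```

An automated gate like this decides whether a candidate is even eligible. A manual approval step can still sit between a passing gate and the production switch.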

## Mistake 4: Ignoring Data Drift

The problem: Model performance degrades silently.

Why it happens: There is no monitoring or drift detection.

How to avoid it:

- Monitor feature distributions
- Detect prediction drift
- Set up automated alerts
- Trigger retraining on drift
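The KS test shown earlier is one way to monitor feature distributions. Another widely used drift score is the population stability index (PSI), which compares binned distributions. The sketch below bins by the reference sample's quantiles. The often-quoted rule of thumb (below 0.1 stable, above 0.25 a significant shift) is an industry convention, not a statistical guarantee.

```python
import bisect
import math
import random


def population_stability_index(reference, current, bins=10, eps=1e-6):
    """PSI of one numeric feature; bin edges are the reference sample's quantiles."""
    ref = sorted(reference)
    edges = [ref[len(ref) * i // bins] for i in range(1, bins)]  # bins - 1 cut points

    def shares(values):
        counts = [0] * bins
        for v in values:
            counts[bisect.bisect_right(edges, v)] += 1
        # eps avoids log(0) and division by zero for empty bins
        return [max(c / len(values), eps) for c in counts]

    p, q = shares(reference), shares(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


rng = random.Random(0)
reference = [rng.gauss(0, 1) for _ in range(5000)]
same = [rng.gauss(0, 1) for _ in range(5000)]
shifted = [rng.gauss(1, 1) for _ in range(5000)]  # mean moved by one standard deviation
psi_same = population_stability_index(reference, same)
psi_shifted = population_stability_index(reference, shifted)
print(f"same distribution: {psi_same:.3f}, shifted: {psi_shifted:.3f}")
```

Unlike a p-value, PSI does not shrink toward "significant" just because traffic volume grows. That makes it easier to alert on at high request rates.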

## Mistake 5: Manual Retraining

The problem: Models go stale and performance degrades.

Why it happens: Retraining is manual and infrequent.

How to avoid it:

- Automate the retraining pipeline
- Trigger retraining on drift or performance degradation
- Use scheduled retraining as a fallback
- Test new models before deployment
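The daily `check_model_health` job shown earlier retrains whenever its condition holds, which can lead to back-to-back retraining runs. Below is a sketch of a decision function that adds a cooldown and a scheduled fallback. The function name and default values are hypothetical.

```python
from datetime import datetime, timedelta


def should_retrain(now, last_trained, accuracy, drift_detected, baseline=0.90,
                   tolerance=0.95, cooldown=timedelta(hours=24),
                   max_age=timedelta(days=30)):
    """Return (decision, reason) for a hypothetical retraining policy."""
    age = now - last_trained
    if age >= max_age:
        return True, "scheduled fallback: model older than max_age"
    if age < cooldown:
        return False, "cooldown: retrained too recently"
    if accuracy < baseline * tolerance:
        return True, "performance degradation"
    if drift_detected:
        return True, "data drift"
    return False, "healthy"


now = datetime(2024, 3, 1, 2, 0)
print(should_retrain(now, now - timedelta(hours=2), accuracy=0.70, drift_detected=True))
print(should_retrain(now, now - timedelta(days=3), accuracy=0.80, drift_detected=False))
```

During the cooldown, a degraded model should still raise an alert, just not a new training run. Returning the reason makes each decision easy to log and audit.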

## Mistake 6: Lack of Reproducibility

The problem: You cannot recreate past results or debug issues.

Why it happens: Random seeds are not set and dependencies are not pinned.

How to avoid it:

- Set random seeds everywhere
- Pin dependency versions
- Document the environment setup
- Use containers for reproducibility
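Pinning only helps if the running environment is actually checked against the pins. Below is a stdlib-only sketch. It assumes a simple `requirements.txt` with `name==version` lines and deliberately ignores extras, environment markers, and version ranges, which real lock-file tools handle. The package versions in the example are made up.

```python
from importlib import metadata


def parse_pins(requirements_text):
    """Parse exact 'name==version' pins; comments, blanks and ranges are skipped."""
    pins = {}
    for line in requirements_text.splitlines():
        line = line.split("#", 1)[0].strip()
        if "==" in line:
            name, version = line.split("==", 1)
            pins[name.strip().lower()] = version.strip()
    return pins


def find_mismatches(pins, installed=None):
    """Return {name: (pinned, installed)} for every pin that is not satisfied.

    If `installed` is None, versions are read from the current environment.
    """
    if installed is None:
        installed = {}
        for name in pins:
            try:
                installed[name] = metadata.version(name)
            except metadata.PackageNotFoundError:
                installed[name] = None
    return {
        name: (version, installed.get(name))
        for name, version in pins.items()
        if installed.get(name) != version
    }


requirements = "scikit-learn==1.4.2  # model\nmlflow==2.12.1\nnumpy>=1.26\n"  # example pins
pins = parse_pins(requirements)
print(find_mismatches(pins, installed={"scikit-learn": "1.4.2", "mlflow": "2.11.0"}))
```

In a training or serving entrypoint, `find_mismatches(pins)` without the `installed` argument checks the live environment. A non-empty result can fail the job early.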

# Best Practices for Production ML

## 1. Treat ML Like Software

ML Code + Data + Config → Reproducible Model

Version everything: code, data, hyperparameters, and environment.

## 2. Automate Everything

Data Pipeline → Training → Validation → Deployment → Monitoring → Retraining

Manual steps are error-prone and do not scale.

## 3. Monitor Continuously

Model Performance + Data Drift + System Metrics → Alerts

Catch issues before they affect users.

## 4. Test Thoroughly

Unit Tests → Integration Tests → Validation Tests → A/B Tests

Each layer catches different issues.

## 5. Document Decisions

Why this model? Why these features? Why this threshold?

Your future self will thank you.

## 6. Plan for Failure

Canary Deployment → A/B Test → Rollback Strategy

Assume something will go wrong, and have a plan.
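A canary release usually routes a small, stable share of traffic to the new version and rolls back if its error rate is clearly worse. Below is a minimal sketch with hypothetical names. Hashing the request or user ID keeps each caller on the same version across requests. The 1.5x error-rate ratio is an arbitrary example threshold.

```python
import hashlib


def route(request_id, canary_percent):
    """Send a fixed share of traffic to the canary; the same ID always gets the same version."""
    bucket = int(hashlib.sha256(request_id.encode("utf-8")).hexdigest(), 16) % 100
    return "canary" if bucket < canary_percent else "stable"


def canary_verdict(stable_errors, stable_total, canary_errors, canary_total, max_ratio=1.5):
    """Roll back if the canary's error rate exceeds max_ratio times the stable error rate."""
    stable_rate = stable_errors / max(stable_total, 1)
    canary_rate = canary_errors / max(canary_total, 1)
    return "rollback" if canary_rate > max_ratio * stable_rate else "continue"


ids = [f"user-{i}" for i in range(10_000)]
canary_share = sum(route(i, 10) == "canary" for i in ids) / len(ids)
print(f"canary share: {canary_share:.3f}")
print(canary_verdict(stable_errors=10, stable_total=1000, canary_errors=5, canary_total=100))
```

With small canary traffic, error rates are noisy. In practice, wait until the canary has served enough requests before judging it, and widen `canary_percent` in steps only while the verdict stays `continue`.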

# When to Implement MLOps

Start simple:

- Single model, manual deployment
- Basic monitoring
- Manual retraining

Add complexity gradually:

- Multiple models
- Automated deployment
- Data drift detection
- Automated retraining

Full MLOps:

- Many models
- Complex pipelines
- Comprehensive monitoring
- Self-healing systems

Don't over-engineer early. Start with a minimum viable MLOps setup and evolve it as your system grows.

# Conclusion

MLOps is about bringing software engineering discipline to machine learning. It is not just about deploying models; it is about building reliable, maintainable, and scalable ML systems.

Key components:

- Data versioning: Reproducible training datasets
- Feature store: Consistent features across pipelines
- Model versioning: Tracked model history and lineage
- Automated validation: Issues caught before production
- Continuous monitoring: Drift and degradation detected early
- Automated retraining: Models kept fresh

Implementing MLOps requires upfront investment, but it pays dividends in reliability, maintainability, and team velocity. Start with a pilot project, establish best practices, and scale gradually.

MLOps is what separates a model that works from a model that works reliably in production.